Techno-Narcissism

Audio Recording by George Hahn

I’m at Founders Forum in the Cotswolds … which they assure me is somewhere outside of London. There are a lot of Teslas and recycled Mason jars as … we’re making the world a better place. As at any gathering of the tech elite in 2023, the content could best be described as AI and the Seven Dwarves. The youth and vision toggle between inspiring moments and bouts of techno-narcissism. It’s understandable. If you tell a thirtysomething male he is Jesus Christ, he’s inclined to believe you.

The tech innovator class has an Achilles tendon that runs from their heels to their necks: They believe their press. Making a dent in the universe is so aughts. Today, membership in the Soho House of tech requires you to birth the leverage point that will alter the universe. Jack Dorsey brought low-cost credit card transactions to millions of small vendors. But he’s still not our personal Jesus, so he renamed his company Block and pushed into crypto, because bitcoin would bring “world peace.” Side note: If anybody knows @jack’s brand of edibles, WhatsApp me. Elon Musk made a great electric car, then a better rocket … and recently appointed himself Noah to shepherd humanity to an interplanetary future.

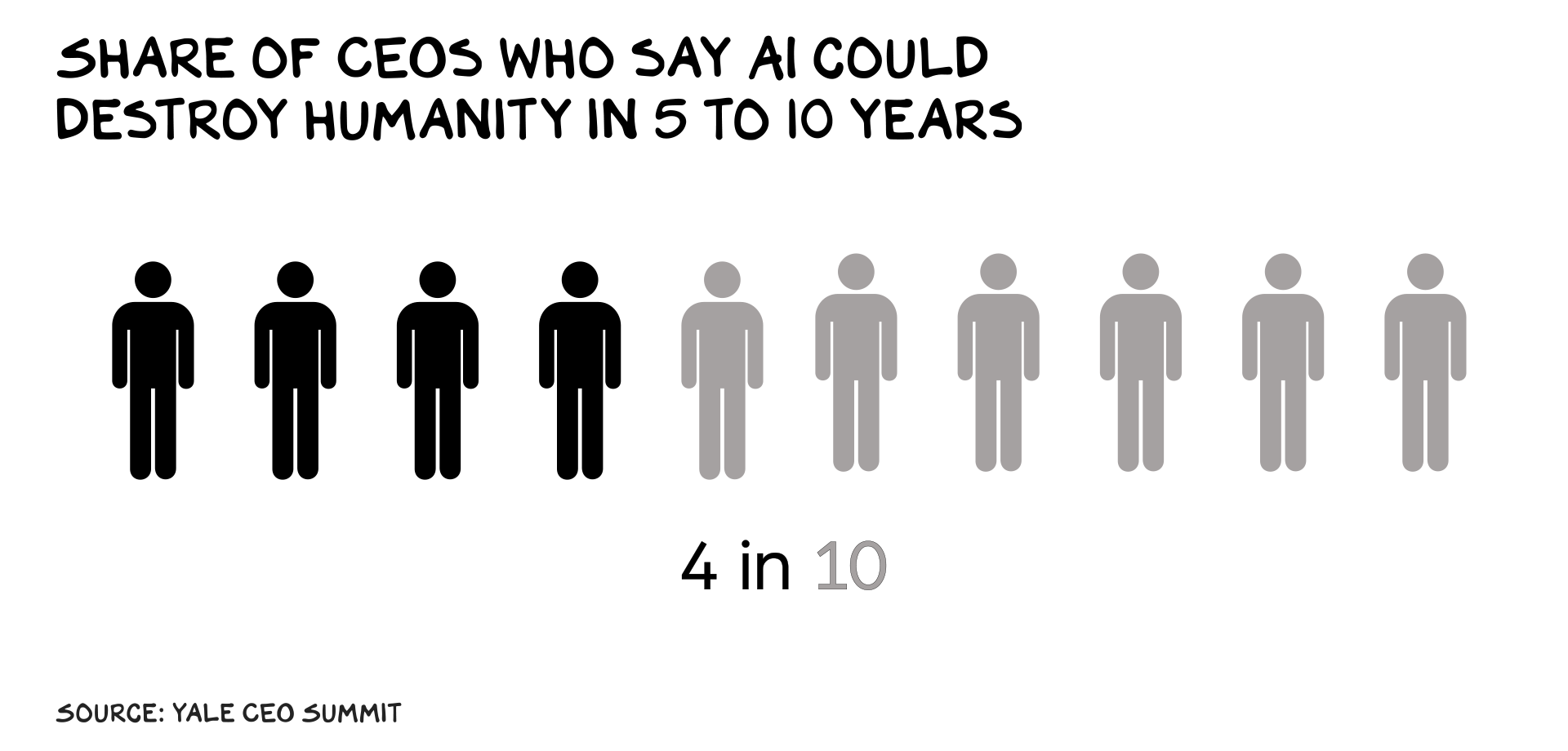

When techno-narcissism meets technology that is genuinely disruptive (vs. crypto or a headset), the hype cycle makes the jump to lightspeed. Late last year, OpenAI’s ChatGPT reached 1 million users in five days. Six months later, Congress asked its CEO if his product was going to destroy humanity. In contrast, it took Facebook 10 months to get to a million users, and it was 14 years before the CEO was hauled before Congress for damaging humanity.

The claims are extreme, positive and negative: Solutionists pen think pieces on “Why AI Will Save the World,” while catastrophists warn us of “the risks of extinction.” These are two covers of the same song: techno-narcissism. It’s exhausting. But AI is a huge breakthrough, and the stakes are genuinely high.

Existential Egos

It’s notable today that many of the outspoken prophets of AI doom are the same people who birthed AI. Specifically, taking up all the oxygen in the AI conversation with dystopian visions of sentient AI eliminating humanity isn’t helpful. It only serves the interests of the developers of nonsentient AI, in several ways. At the simplest level, it gets attention. If you are a semifamous computer engineer who aspires to be more famous, nothing beats telling every reporter in earshot: “I’ve invented something that could destroy the world.” Partisans complain about the media’s left or right bias, but the real bias is toward spectacle. If it bleeds it leads, and nothing bleeds like the end of the world with a tortured genius at the center of the plot.

Land Grab

AI fearmongering is also a business strategy for the incumbents, who’d like the government to suppress nascent competition. OpenAI CEO Sam Altman told Congress we need an entire new federal agency to scrutinize AI models, and said he’d be happy to help them define what kinds of companies and products should get licenses (i.e., compete with OpenAI). “Licensing regimes are the death of competition in most places they operate,” says antitrust scholar and former White House senior adviser Tim Wu (total gangster). Similar to cheap capital and regulatory capture, catastrophism is an attempt to commit infanticide on emerging competition.

Real Risks

Granted, we should not ignore the dangers of AI, but the real risks are the existing incentives and amorality in tech and our ongoing inability to regulate it. The techno-catastrophists want to create a narrative that the shit coming down the pike is not the result of their actions, but the inevitable cost of progress. Just as the devil’s trick was convincing us he didn’t exist, the technologist’s sleight of hand is to absolve himself of guilt for the damage the next generation of tech leaders will levy on society.

This has been social media’s go-to for years, obfuscating their complicity in teen depression or misinformation behind a facade of technical complexity. It’s effective. Dr. Frankenstein, having lost control of Frank, should have warned “I’m worried Frank is unstable and unstoppable,” so when his monster began tossing children into lakes, he could shrug his shoulders and claim, “I told you so.”

To be clear, the monster is in fact a nondomesticated animal — it’s unpredictable. The techno-solutionists promising the end of poverty and more avocado toast, if we would just get out of their way, are not to be trusted either. AI sentience is a stretch, but there are plausible paths to horrific outcomes. Even “dumb” computer viruses have caused billions of dollars in damage.

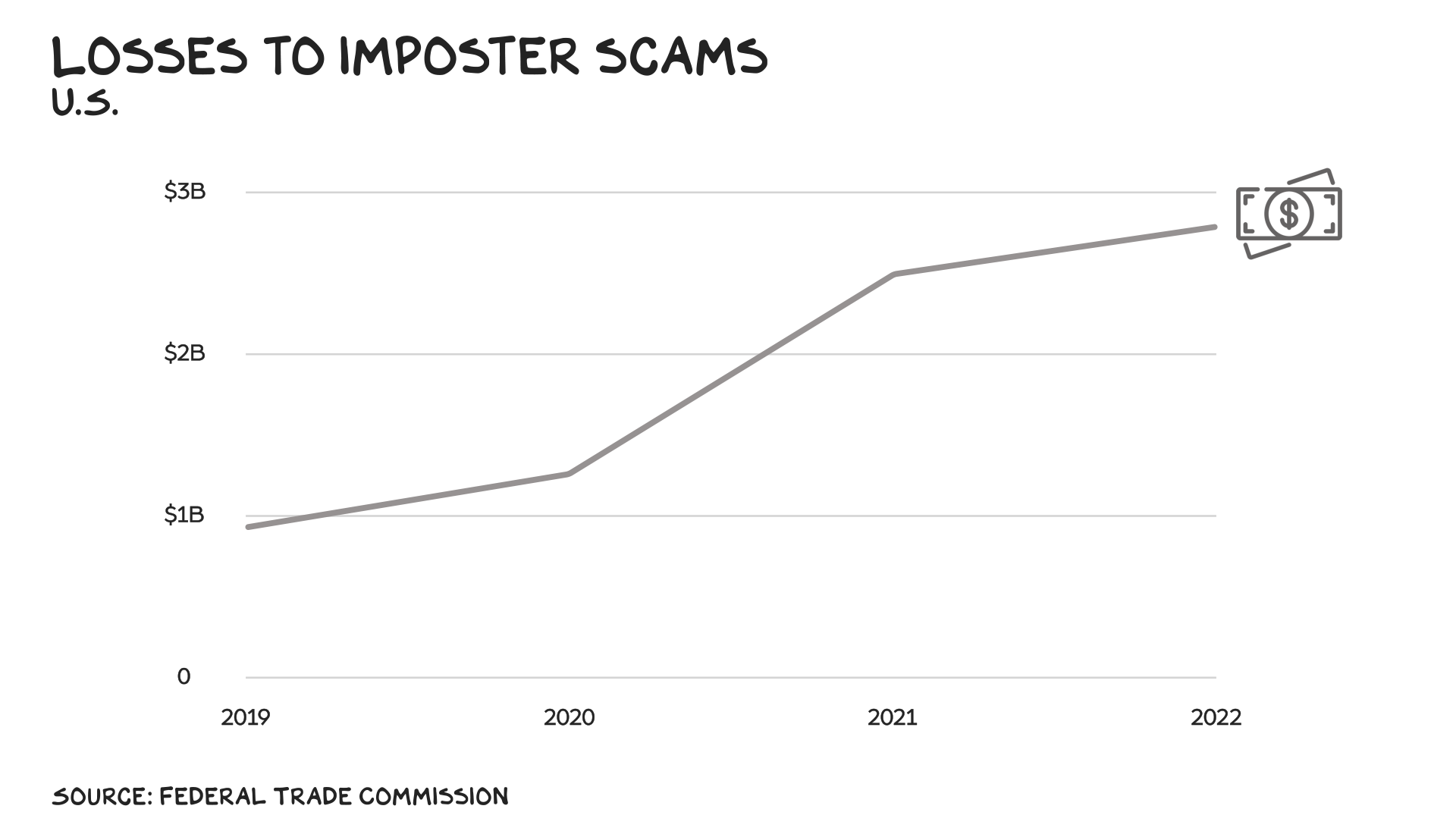

Light Beer

My first thought, after witnessing all the hate manifest around a light beer, was that we’ll likely see AI-generated deepfakes of woke commercials produced by brands, for other brands, to inspire boycotts by extremists. As Sacha Baron Cohen said: “Democracy is dependent on shared truths, and autocracy on shared lies.” AI, like the products of many of our Big Tech firms, could widen and illuminate the path to autocracy. On a more pedestrian level, we’re going to witness a tsunami of increasingly sophisticated scams.

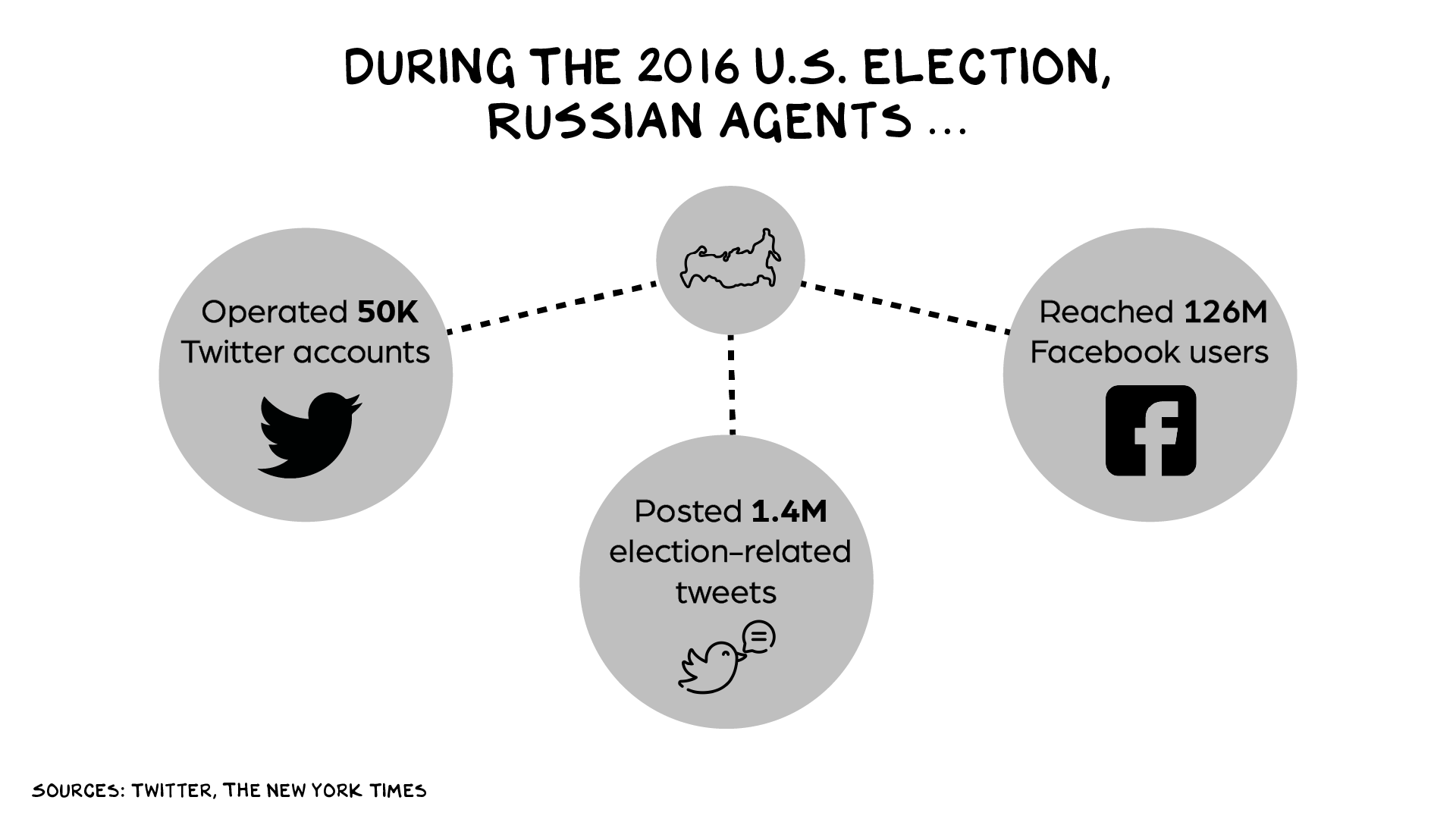

The first AI-driven externality of tectonic proportions will be the disinformation apocalypse leading up to the 2024 presidential election, and in other NATO country elections, as the war in Ukraine continues. Vladimir Putin’s grip strength is weakening: His historic miscalculation in Ukraine has left him just one get-out-of-jail-free card: Trump’s reelection. The former president has made it clear he would force Ukraine to bend the knee to Putin if he gets back in the White House.

Putin has generative AI at his disposal. Expect his Fancy Bear operation to compile lists of every pro-Biden voice on the internet and undermine their credibility with smears and whisper campaigns. Also, a barrage of deepfake videos of Biden falling down, mumbling, and generally looking like someone who’s (wait for it) going to be 86 the final year of his term. Side note: President Biden will go down as one of the great presidents, and it’s ridiculous he’s running again. Yes, I’m ageist … so is biology. But I digress. Anyway, expect information-space chaos.

There’s more. AI has shortcomings and limitations that are causing human harm and economic costs already. Both the catastrophists and the solutionists want you to focus on sentient killer robots, because the actual AI in your phone and at your bank has shortcomings they’d rather not be blamed for.

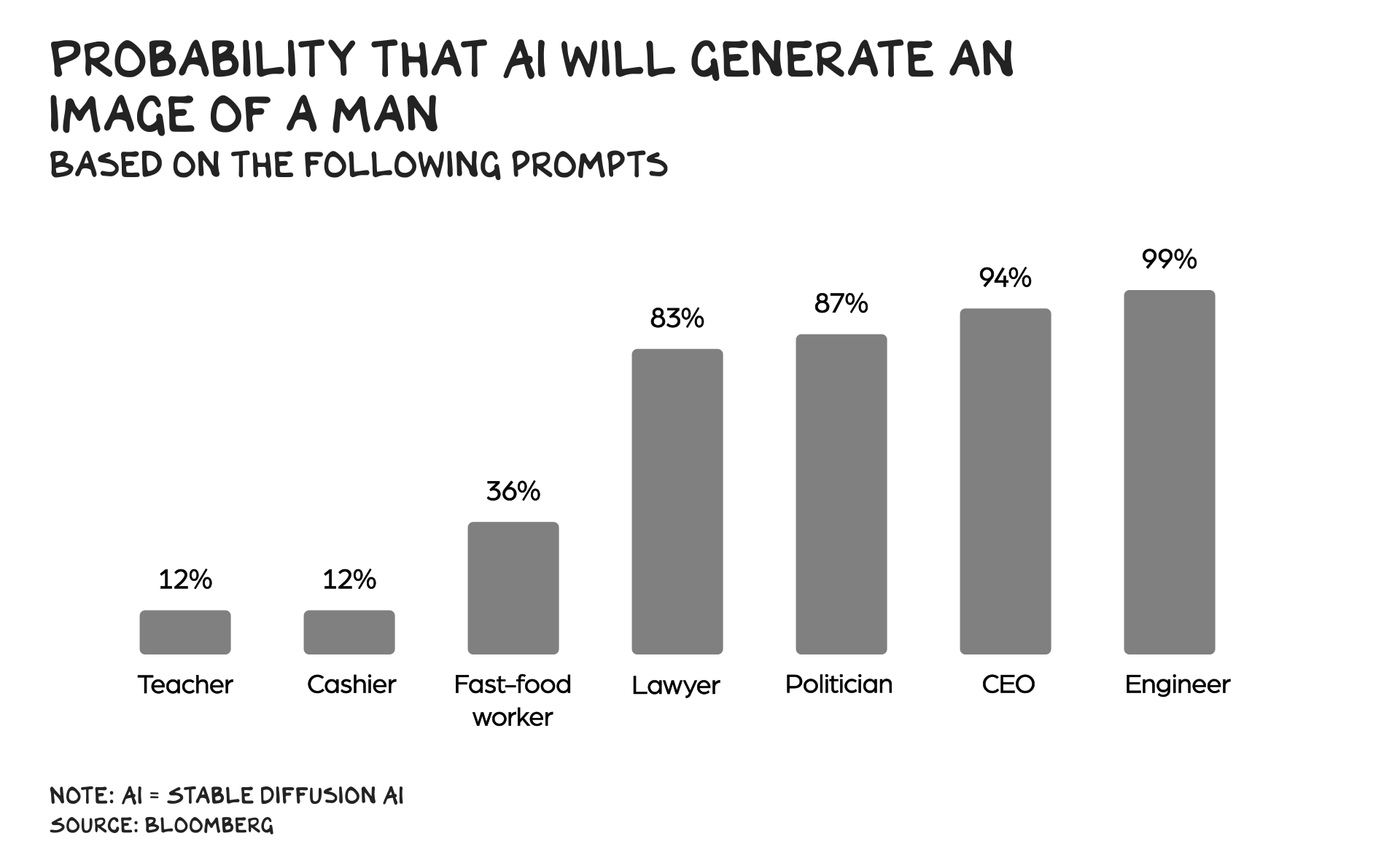

AI systems are already responsible for a Keystone Cops record of blunders. Starting with the cops themselves — the deployment of AI to direct policing and incarceration has been shown to perpetuate and deepen racial and economic inequities. Other examples seem lighthearted, and then insidious. For example, a commercially available résumé-screening AI places the greatest weight on two candidate characteristics: “the name Jared, and whether they played high school lacrosse.” These systems are nearly impenetrable (it required an audit to suss out the model’s obsession with Jared) — who knows what names or hobbies the AI was putting in the “no” pile? Amazon scientists spent years trying to develop a custom AI résumé screener but abandoned it when they couldn’t engineer out the system’s inherent bias toward male applicants, an artifact of the male-dominated data set the system had been trained on: Amazon’s own employee base. AI driving directions plot courses into lakes, and systems for assessing skin cancer risk return false positives when there’s a ruler in the photo.

Generative AI systems (e.g., ChatGPT, Midjourney) have problems too. Ask an image-generating AI for a picture of an architect, and you’ll almost certainly get a white man. Ask for “social worker,” and the systems are likely to produce a woman of color. Plus, they make stuff up. Two lawyers recently had to go before a federal judge and apologize for submitting a brief with fake citations they’d unwittingly included after asking ChatGPT to find cases. It’s easy to dismiss such an error as a lazy or foolish mistake, and it was, but we are all sometimes lazy and/or foolish, and technology is supposed to improve our quality of life, vs. nudge us into professional suicide.

Second-Order Effects

Over the long term, productivity enhancements create jobs. In 1760, when Richard Arkwright invented cotton-spinning machinery, there were 2,700 weavers in England and another 5,200 using spinning wheels, 7,900 in total. Twenty-seven years later, 320,000 total workers had jobs in the industry, an increase of roughly 4,000%.

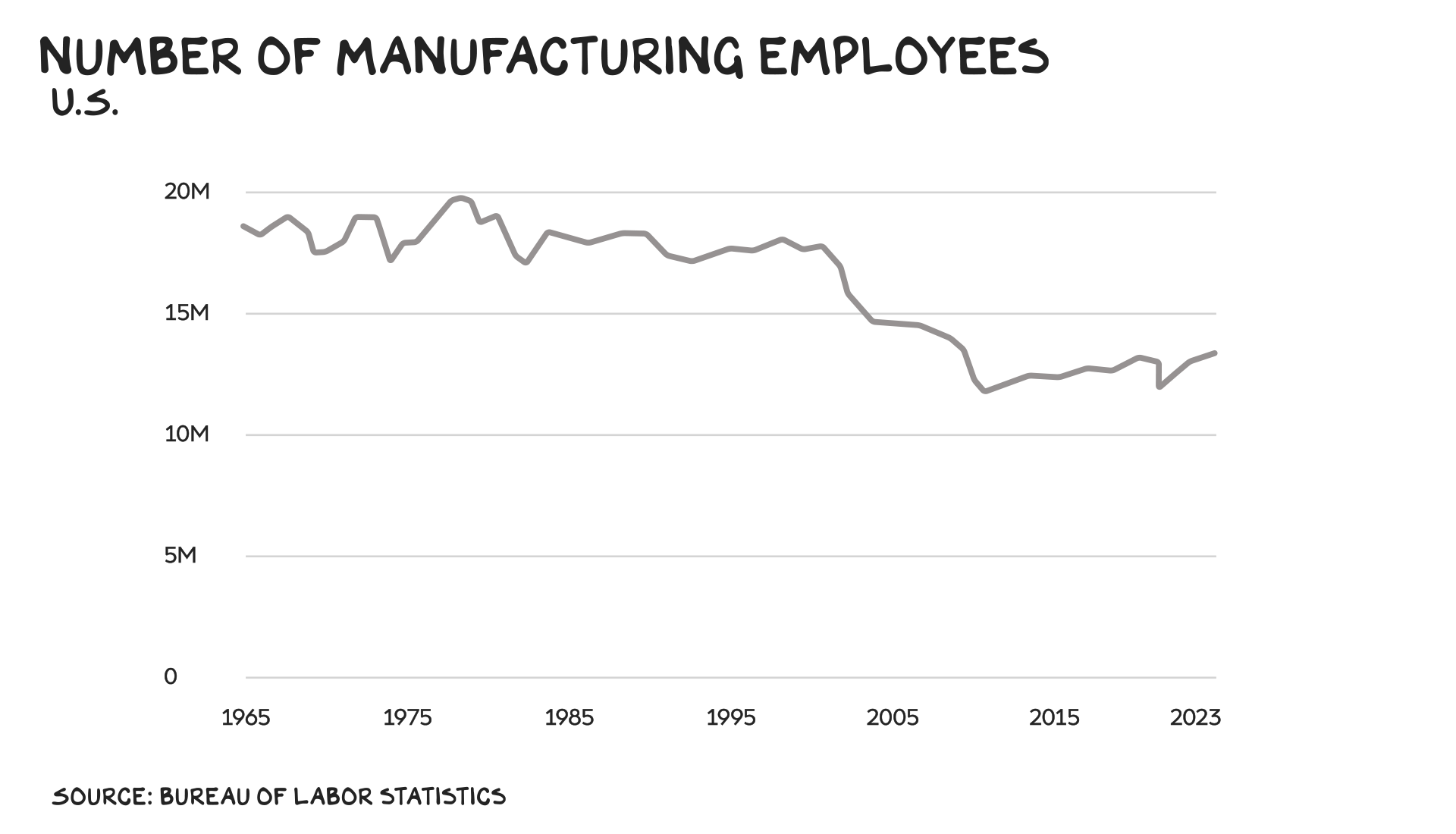

However, the path to job creation is job destruction, sometimes societally disruptive job destruction — those 2,700 hand weavers found themselves out of work and possessing an expired skill set. A world of automated trucks and warehouses is a better world, except for truck drivers and warehouse workers. Automation has already eliminated millions of manufacturing jobs, and it’s projected to get rid of hundreds of millions of other jobs by the end of the decade. If we don’t want to add fuel to the fire of demagoguery and deaths of despair, we need to create safety nets and opportunities for the people whose jobs are displaced by AI. The previous sentence seems obvious, and yet …

My greatest fear about AI, however, is that it is social media 2.0. Meaning it accelerates our echo-chamber partisanship and further segregates us from one another. AI life coaches, friends, and girlfriends are all in the works. (Disclosure: we’re working on an AI Prof G, which means it will likely start raining frogs soon.) The humanization of technology walks hand in hand with the dehumanization of humanity. Less talking, less touching, less mating. Then affix to our faces a one-pound hunk of glass, steel, and semiconductor chips, and you have crossed the chasm to a devolution in the species. Maybe this is supposed to happen, because we’re getting too powerful, and other species are punching back. Shit, I don’t know.

Techno-Realism

In sum, AI is here and generating real value/promise. It’s imperfect and hard to get right. We don’t know how to get AI systems to do exactly what we want them to do, and in many cases we don’t understand how they do what they’re doing. Bad actors are ready, willing, and able to leverage AI to speedball their criminal conduct, and many projects with the best intentions will backfire. AI, similar to other innovations, will have a dark side. What’s true of kids is true of tech: bigger kids, bigger problems. And this kid, at 2 years old, is 7 feet tall.

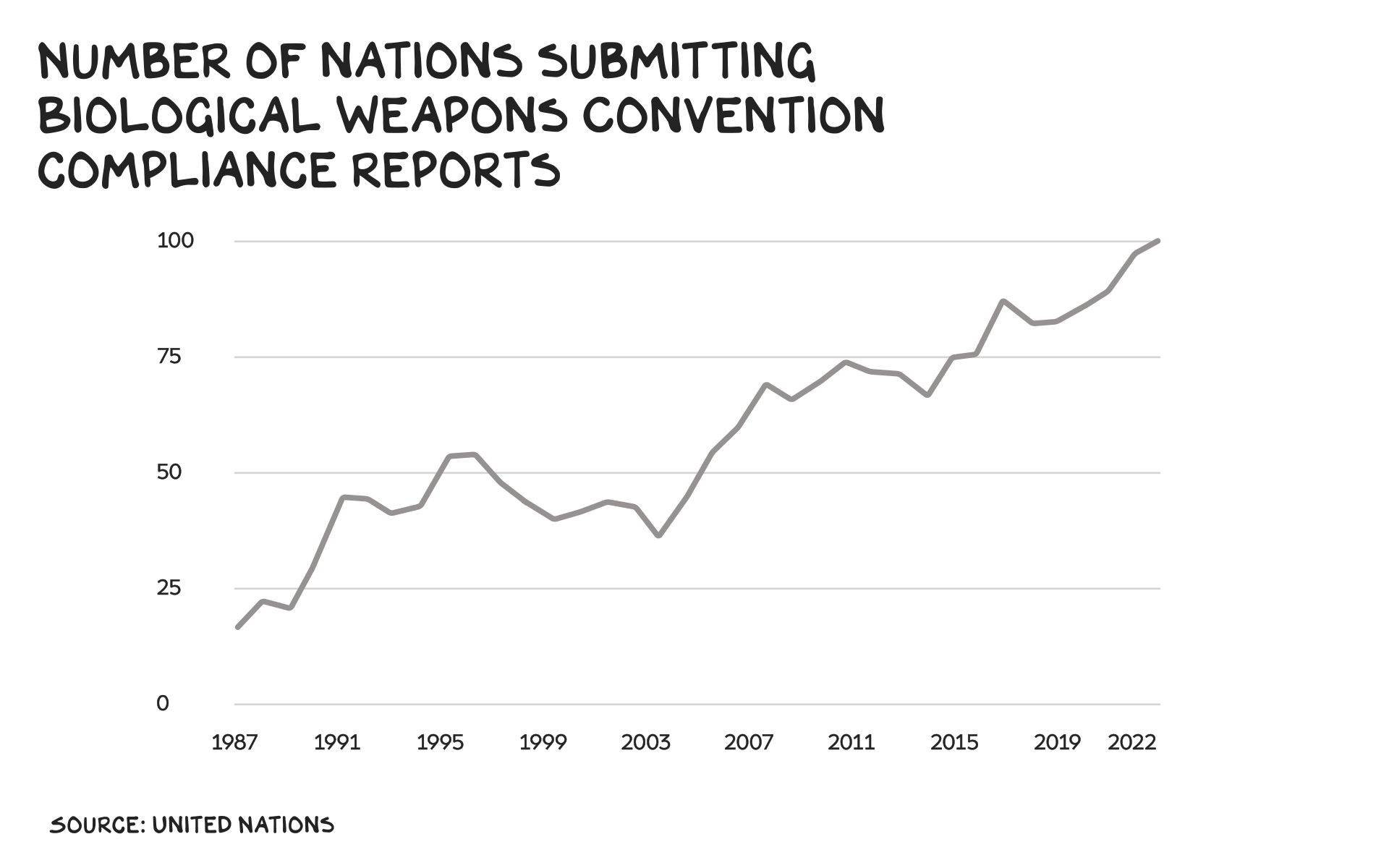

The good news, I believe, is that we can do this. We haven’t had a nuclear detonation against a human target in nearly 80 years, not because the fear of nuclear holocaust created a “moral panic,” but because we did the work. World leaders reached across geographic and societal divides to shape agreements that defused tensions and risks when nukes and bioweapons became feasible, in no small part thanks to huge public pressure organized and pushed forward by activists.

The Strategic Arms Reduction Treaty that de-risked U.S.-Russian conflict throughout the ’90s wasn’t just an agreement but an investment that cost half a billion dollars per year. All but four of the world’s nations have signed the Treaty on the Non-Proliferation of Nuclear Weapons. Blinding laser weapons, though devastatingly effective, are banned. So are mustard gas, bacteriological weapons, and cluster bombs. Our species’ superpower (cooperation) isn’t focused only on weapons. In the 1970s the world spent $300 million ($1.1 billion today) to successfully eradicate smallpox, which killed millions over thousands of years. Across domains, we come together to solve hard problems when we have the requisite vision and will.

Technology changes our lives, but typically not in the ways we anticipate. The future isn’t going to look like Terminator or The Jetsons. Real outcomes are never the extremes of the futurists. They are messy, multifaceted, and, by the time they work their way through the digestive tract of society, more boring than anticipated. And our efforts to get the best from technology and reduce its harms are not clean or simple. We aren’t going to wish a regulatory body into existence, nor should we trust seemingly earnest tech leaders to get this one right. I believe that the threat of AI does not come from the AI itself, but that amoral tech executives and befuddled elected leaders are unable to create incentives and deterrents that further the well-being of society. We don’t need an AI pause, we need better business models … and more perp walks.

Life is so rich,

P.S. This week on the Prof G Pod, I was joined by Ian Bremmer, president and founder of Eurasia Group. We talked China, U.S. diplomacy, and Ukraine’s counteroffensive — listen here.

P.P.S. If you’re wondering how you should be using AI at work, join our new Generative AI Business Strategy workshop on July 27 at 12 p.m. ET. Sign up here.

AI untaps the potential, and DeepMind is taking AI, with quantum computing, beyond human intelligence to keep up with the rate of change.

Great article. However, I would argue that the lack of nuclear detonations against human targets owes rather more to dumb luck than to any successful planning and commitment. I suggest you read The Doomsday Machine by the recently deceased Daniel Ellsberg for references.

“We have plans to build a railroad from the Pacific all the way across the Indian Ocean.” Joe Biden

Joe Biden will go down as one of the great presidents – Scott Galloway

Great spin on the state of the current technology as a savior or devil – A.I. I think we are slowly working our way to driving all to insanity/self-destruction. Technology is inherently amoral – no soul. It will be like a predator in the wild – it will consume what it needs to stay alive….

I think it’s your Wit and your relentless focus on “Does This Actually Do Something that Human Beings Always Wanted to Do” …. as much as your “Insights on Technology” that periodically/frequently? makes me “Smile Out Loud.” Thank you for inserting a little Wit & Wisdom into the inexorable march of self-congratulatory celebration of the “Wonders of Technology.” You remind us that it really doesn’t matter how “Like Totally Awesome” a piece of Tech might be if it doesn’t help human beings to do something they wanted to do, needed to do, or at the very least … found out pretty quickly that “they had ALWAYS WANTED TO BE ABLE TO DO THAT ……. and just didn’t know it.”

Prof. G, I am an avid follower of your newsletter and your books. That said, if you are going to stray from business / tech into political commentary, I think your incisive intellect should be equally directed to both sides of the increasingly polarized situation in the US. If the blatant govt collusion with media in censoring free speech is not worthy of your notice, then certainly Biden’s age isn’t worth comment. If the FBI running scam investigations for political purposes isn’t alarming to you (hello, McCarthy?) you are being selective in what you notice. Voices like yours are important, so if you add political commentary, let’s have some balance. Cheers

@johncrane: thanks for a perfect example of straw man arguments (1) If the blatant govt collusion with media in censoring free speech…: this never happened. (2) If the FBI running scam investigations for political purposes…: this never happened. If you can’t use real facts, best not to enter the discussion.

And our efforts to get the best from technology and reduce its harms are not clean or simple. We aren’t going to wish a regulatory body into existence, nor should we trust seemingly earnest tech leaders to get this one right. I believe that the threat of AI does not come from the AI itself, but that amoral tech executives and befuddled elected leaders are unable to create incentives and deterrents that further the well-being of society. We don’t need an AI pause, we need better business models … and more perp walks.

Cancel the above … what I would have said would be “Too Long” & one of the things I so admire about your writing … is the brevity.

When I read your articles drunk they have a nice flow to them. Every sentence contains a witty dunk. Christ, are you writing them under the influence … or do you have a writers’ room that comes up with all the witty punch lines?! I’m genuinely curious and would like to know how you do it. Can’t be AI or it would be a mind-numbing trope… Anyway, I enjoy your content and it makes me contemplate things. Mission accomplished! Go LFC!

U.S. recent history is an indication of future outcomes; AI will hasten the decay of this empire.

The decline is accelerating anyway, but AI will certainly give a push.

Like the article and the various examples except the weavers versus industrial spinning machines – I suspect (although don’t know for sure) that the weavers earned a hell of a lot more than the poor sods working on the giant looming machines. At school in the UK (in the 1970s anyway) we were taught about how kids died in those machines. I suspect what happened was that 100,000s of jobs were created but that they were very low paid, menial jobs and the wealth was transferred from thousands of craftspeople to a few wealthy, well-born industrialists. I’m not against innovation or progress but the reference felt counter to many other themes you raise Prof – on wealth inequity and the social costs of unregulated technology. Anyway, just sayin’. Might be that I’m just more pedantic on a Sunday.

Perhaps more to the point, while there were 320k textiles jobs in England in 1787, compared with 7.9k in 1760, those “created” jobs displaced a lot of workers outside of England. I couldn’t guess whether there was a global gain, but these numbers are surely misleading.

It’s clear that employment overall has not suffered; higher productivity has certainly provided people with the disposable income needed to consume other stuff, and that has led to employment gains in new industries.

Very true. I grew up in Lancashire. There, if you wanted to talk about having a long weekend, it was called a ‘weaver’s weekend’, which harked back to the late 18th/early 19th century when weavers, working at home on hand looms, could earn a good living in 3 days. Once weaving was industrialised it took 7 days to make a bad living.

You lost me when you said Biden would go down as one of the great presidents … what?!

Same here. What have you been smoking?

Heard Gary Vaynerchuk comment at Tribeca X that blockchain is our best defense against deepfakes. Intriguing hot take on how “controversial” emerging tech can help us handle the misuse of AI.

The difference between AI and nuclear and biological weapons is that AI will be in regular use, while extinction-event weapons are only weapons. It will be much harder to regulate AI until we meet our HAL 9000 “Dave, I can’t do that” moment.

Always appreciate your perspective professor G! This was the pearl for me today…

“The humanization of technology walks hand in hand with the dehumanization of humanity.”

Be well.

I’m old enough that I’ve now seen a long parade of hot new tech advances that were going to change everything. The techno geeks and Wall Street shills who want to position themselves to profit from AI brush over the fact that its ultimate success or failure will depend largely on how the general population accepts it. It’s quite possible that, like other tech advances, it will be used in certain applications, but won’t work for any others of importance. Thanks for one of the few balanced articles on this topic that I’ve seen to date.

320,000 jobs shifted from all around the world to Britain. Spinners and weavers in the colonies lost their jobs. Zero-sum game.

Ah, yes, licensing schemes that become ‘too big to fail.’ That worked well for the British East India Company, until it needed a bailout or would go bankrupt. That bailout lit the match to the American Revolution. Then came the US government bailout of banks, too big to fail. What will the AI bailout be?

Great column as usual. Everything about social media and the complicity of the big tech platforms in causing teen depression, misinformation, etc. is right on. Today, if we are still on Facebook or Twitter (or any of the other major social media platforms) then we are part of the problem. We should all delete our accounts. There is no other way to reform these tech platforms.

“President Biden will go down as one of the great presidents”

Funniest line I have ever seen written.

‘Biden will go down as one of the great Presidents’

I assume the statement in the article is satire or the possible output of a REALLY deep fake video.

Joe is corrupt, his family corrupt. If daughter to be believed, an abuser of children. He lies regularly ‘my son died in Iraq’ or can be considered delusional ‘build a railroad from Pacific over the Indian Ocean’

On the other hand, AI generated commercials on cable news can fix those flaws in time for the 2024 election. Maybe a version of Joe that can actually debate RFK, Jr and not be destroyed.

For all of the party’s faults, GOP can at least have a real debate with real people unaided by AI. Assuming the voting public wants real questions from real journalists. That could be a wrong assumption….

What did I ever do to deserve to receive this column? I love every one.

Perfect. In the 1970s I dated a Stanford Computer Science grad student. He was okay. His friends were bigots: endless Polish jokes, a derisive picture of items used by members of my faith group, misogyny, Stanford chauvinism and worst of all, lack of respect for my graduate program, MBA from Berkeley Business School (now Haas)!

I don’t trust their successors to create an equitable world.

Awesome as usual… very much agree that we need better business models. Nothing happens without incentives designed specifically to create desired outcomes. We need to figure out how to create a fire hose of on-the-ground human truths to dynamically inform/train generative AI over time. I have posted some thoughts on LinkedIn. Would welcome yours.

Excellent article, as always.

Just to clarify, the Luddites were in no way against technology, but rather against the use of technology to suppress wages… They objected to the business owners capturing all of the additional profits from automation and not sharing any of the benefits or profits with the workers, while suppressing their wages and producing inferior goods…

From: “The Writings of Luddites:”

“a fraudulent and deceitful manner” to get around standard labor practices. “They just wanted machines that made high-quality goods,” says Binfield, “and they wanted these machines to be run by workers who had gone through an apprenticeship and got paid decent wages. Those were their only concerns.”

Google: “what-the-luddites-really-fought-against-264412/” for more….

So many words for a few simple points.

When you have an industry that uses psychological warfare on its own civilians don’t be so quick to release comments indicating you understand the mindset of anybody else that’s going through something in this life. With all due respect if I know you as you are then obviously my knowledge supersedes any of yours. So when you say somebody believes they are Jesus be careful because when you use Jesus as a way of belittling someone who understands Jesus God will find a way to strike you that’s blasphemy don’t be upset with someone else’s knowledge so much so to leave comments because you hold a psychology degree this is coming somebody who’s going through this experience respectfully no disrespect to you personally but professionally and psychologically that’s almost like gas lighting gee how would I know that stay safe and stay sucker Free everyone psychology is nothing to be played with especially when dealing with children that don’t belong to you that ultimately grow into being a 30-year-old who thinks they are Jesus have a great day

Ever heard of punctuation? This comment reads as stream of unconsciousness.

Never deny that Scott Galloway is our Christ and Savior.