AI

Audio Recording by George Hahn

Lately tech has been about failures. How technology has failed us. The more sober assessment is that humans are failing tech. Social media may be ruining childhood, and crypto has immolated billions of dollars. But it’s grown-up children that are ruining social media and pumping crypto. The problem? Tech has moved to the front of the house when it’s meant to play a supporting role. Answers in search of questions.

Each year brings a new new thing in tech: wearables, mobile, 3D printing, blockchain, Web 3.0, the metaverse. Some live up to the hype, most do not, and a few exceed it. So … what is the tech of 2023? Artificial intelligence. And more important, will it support societal improvement, or merely transfer more capital to the fast movers and the already wealthy? I believe we’re in the midst of a great leap forward in AI, and that this tech will be transformative, not just lucrative, thanks to its utility.

Just in the past few months we’ve seen progress in AI capabilities that has left even skeptics impressed. A “golden decade,” one researcher called it. A scientist at the Max Planck Institute said, “This will change medicine. It will change research. It will change bioengineering. It will change everything.” This year’s State of AI report shows a hundred slides of accelerating progress. In 2020 there wasn’t a single drug in clinical trials that had been developed using an AI-first approach. Today there are 18. An AI system developed by vaccine pioneer BioNTech successfully identified numerous high-risk Covid variants months before the WHO’s tracking system flagged them. Ukraine has used AI to reduce the time needed to order an artillery strike from 20 minutes to less than 60 seconds.

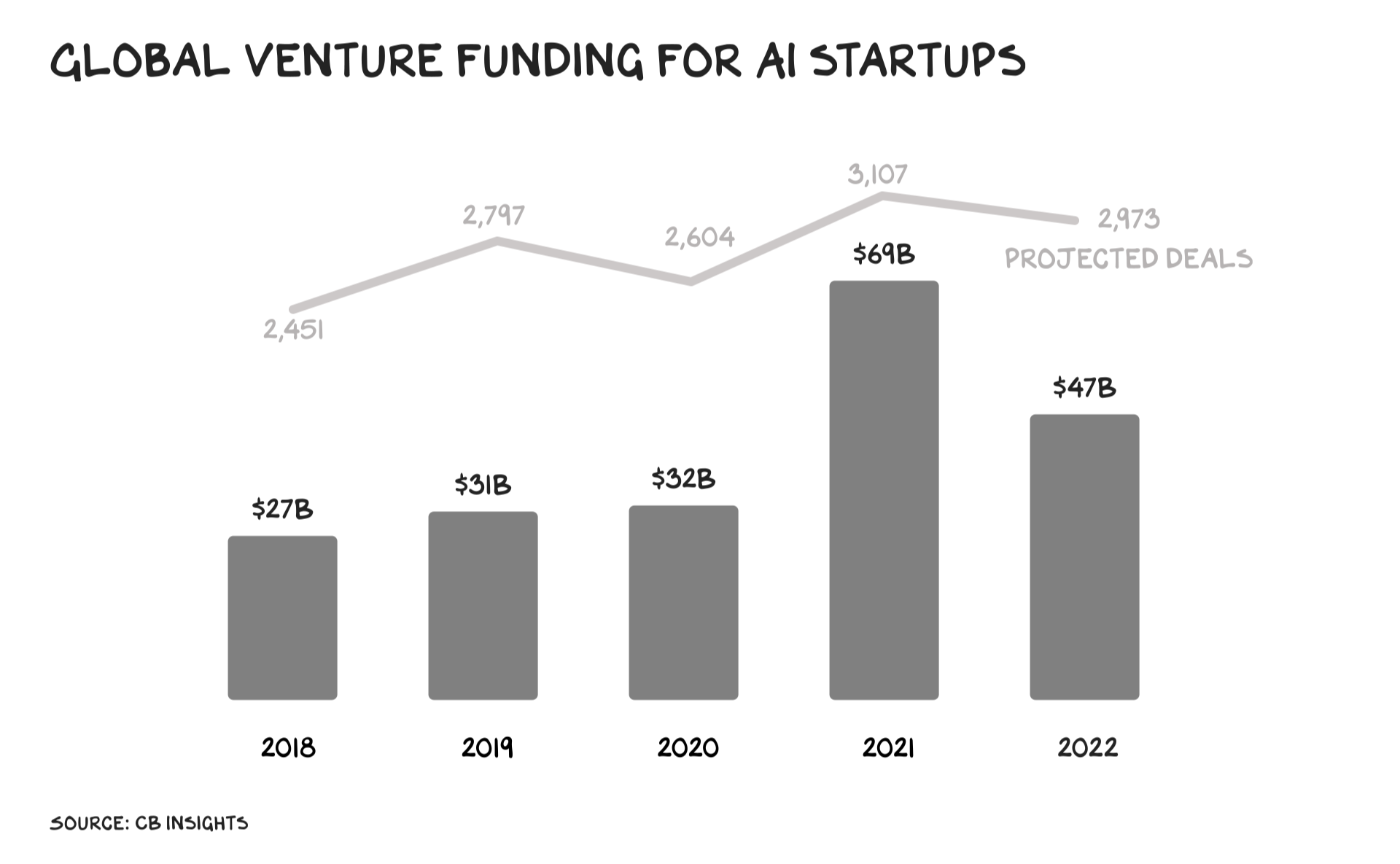

Investment has followed. Over $100 billion has been invested in AI startups since 2020, and funding doubled in 2021. There are 102 AI unicorns in the US and 38 in Asia.

Category vs. Intensity

AI is not strictly defined, but (roughly) it’s a computer system that makes decisions in an independent and flexible way, distinguished from other computational processes by the category of problems it solves rather than their intensity. A calculator processes numbers with speed and precision no human can duplicate, but it produces rote, unvarying results based entirely on its own internal logic. It’s not “intelligent.” The system on my phone that offers three options for each word I type is “intelligent,” because it’s making complex decisions based on evolving inputs. Computers often do things humans cannot. What distinguishes AI is that it does things humans can do, but usually better, faster, and cheaper.
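The calculator-versus-keyboard contrast can be made concrete with a toy next-word suggester. This is a minimal sketch, assuming a simple bigram count model; the class and method names are hypothetical, and real phone keyboards use far more sophisticated learned models. Unlike a calculator's fixed rule, its suggestions change as it sees more of what you type.

```python
from collections import Counter, defaultdict


class NextWordSuggester:
    """Toy bigram model: suggests the words most often typed after a given word."""

    def __init__(self):
        # word -> Counter of the words that have followed it so far
        self.following = defaultdict(Counter)

    def learn(self, text):
        """Update the counts from newly typed text (the 'evolving inputs')."""
        words = text.lower().split()
        for prev, nxt in zip(words, words[1:]):
            self.following[prev][nxt] += 1

    def suggest(self, prev_word, k=3):
        """Return up to k of the most frequent follow-on words, best first."""
        counts = self.following.get(prev_word.lower(), Counter())
        return [word for word, _ in counts.most_common(k)]


if __name__ == "__main__":
    keyboard = NextWordSuggester()
    keyboard.learn("see you soon see you later see you soon")
    print(keyboard.suggest("you"))  # ['soon', 'later']
    keyboard.learn("see you tomorrow see you tomorrow see you tomorrow")
    print(keyboard.suggest("you"))  # ['tomorrow', 'soon', 'later']
```

The same question ("what comes after *you*?") gets a different answer once the inputs have changed; a calculator asked 2 + 2 twice never would.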

AI has been in pop culture for generations. (“I’m sorry, Dave. I’m afraid I can’t do that.”) And it’s quietly been present in our lives for almost as long. Some places AI is having an impact today include: 1) Navigation apps: Plotting the best route across town, assessing different roads and conditions, and offering an eerily accurate ETA. 2) Fraud detection: AI systems can catch and deny fraudulent charges before you know you’ve lost the card. 3) Social media: TikTok’s ability to discern our preferences and addict our children (and parents) is AI feasting on swipes, likes, and scrolls (i.e., data). While Tim Cook kneecapped the Zuck by giving users the ability to opt out of tracking, AI has been the workaround for the Chinese app. The weapon of choice in the war between Big Tech players is AI.

AI’s contributions to transportation, finance, and media already dwarf any value recognized from crypto. Fun fact: There are few AI companies domiciled in the Bahamas. AI will generate continued progress across disciplines, just as the internet and PCs do. That’s newsworthy, but not what’s motivating billions in investment.

What’s different about AI is that it solves real-world problems, specifically problems that are … fuzzy. These have proven stubbornly resistant to traditional computing. Fuzzy problems are all around us; communication is rife with them. Speech recognition, for example, was stagnant for decades. AI is pushing it forward; we are closing in on substantive natural language communication (e.g., Siri will suck less and Alexa will be even better). Content moderation is a fuzzy problem, and difficult for humans. Investing, driving, and a lot of semi-skilled tasks are also fuzzy. Robotics is a set of interconnected fuzzy problems. Most of life’s challenges and questions have no one right answer, but they prompt further questions (additional data capture) that get us to better answers.

AI recognizes patterns and evaluates options. Which Twitter post keeps me engaged, what price on Amazon inspires me to hit the buy button, and whether or not I meant to leave my AirPods at home. The past few months have witnessed an explosion of “generative AI” — systems that create new options.

Look What I Made

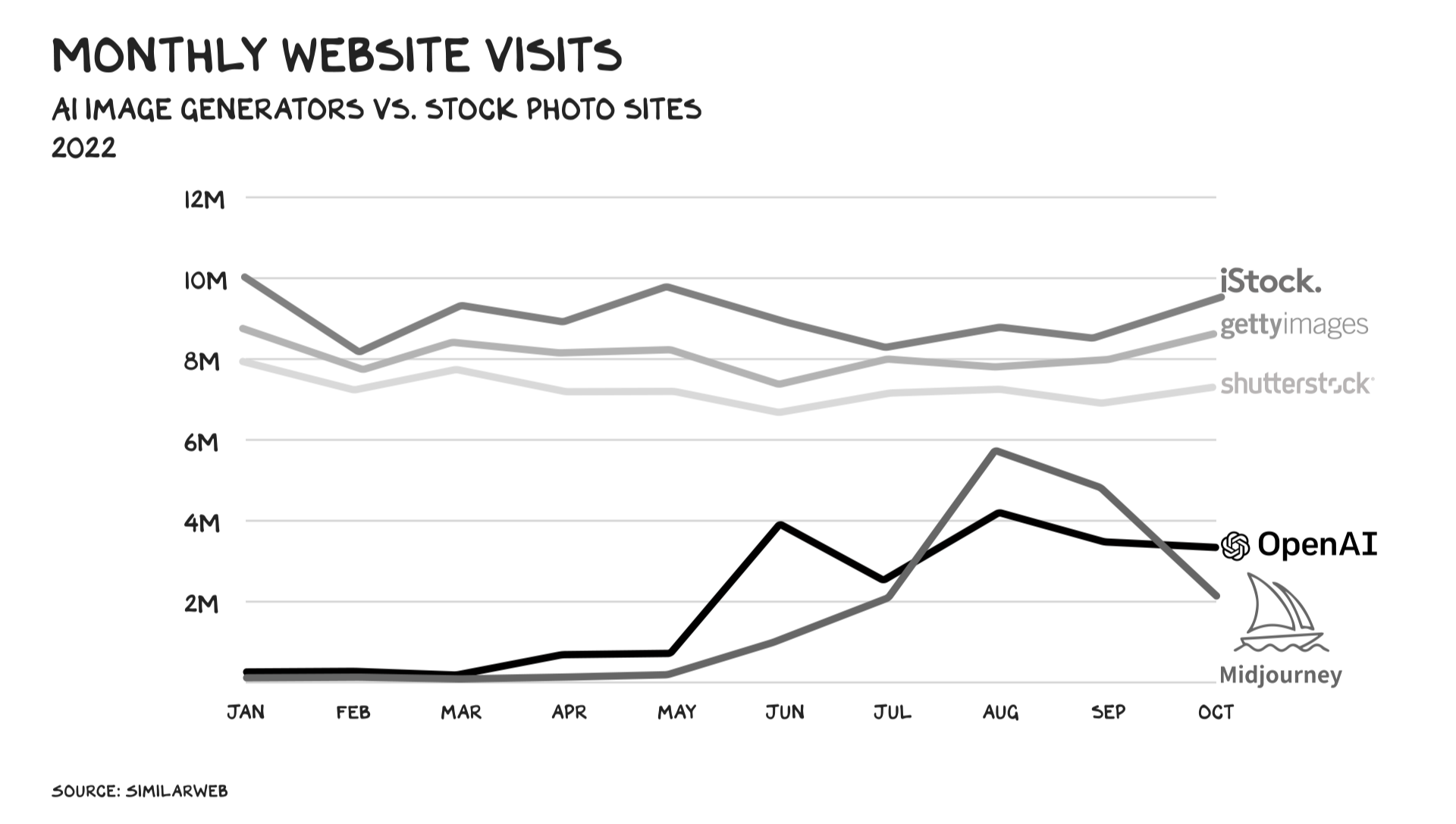

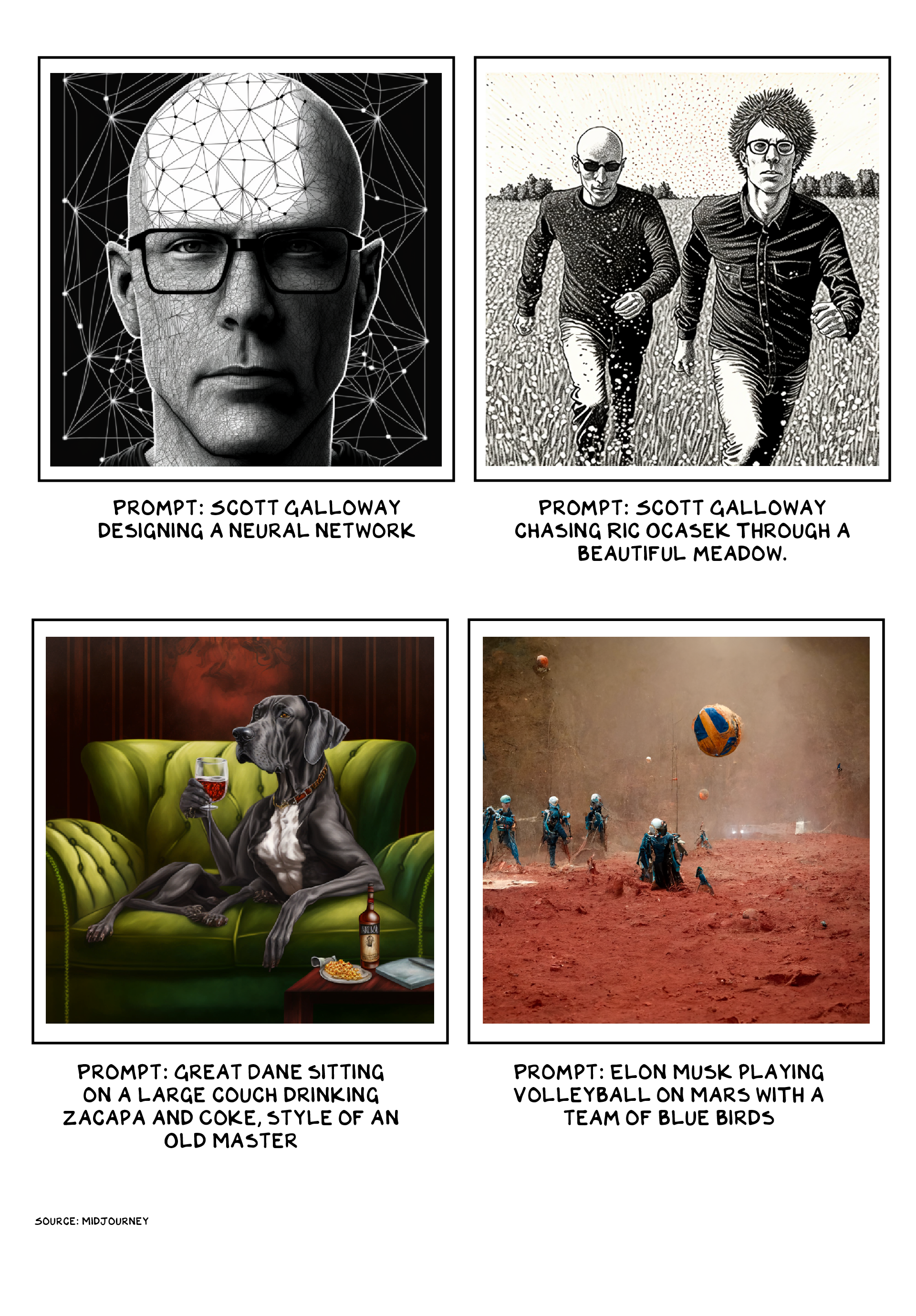

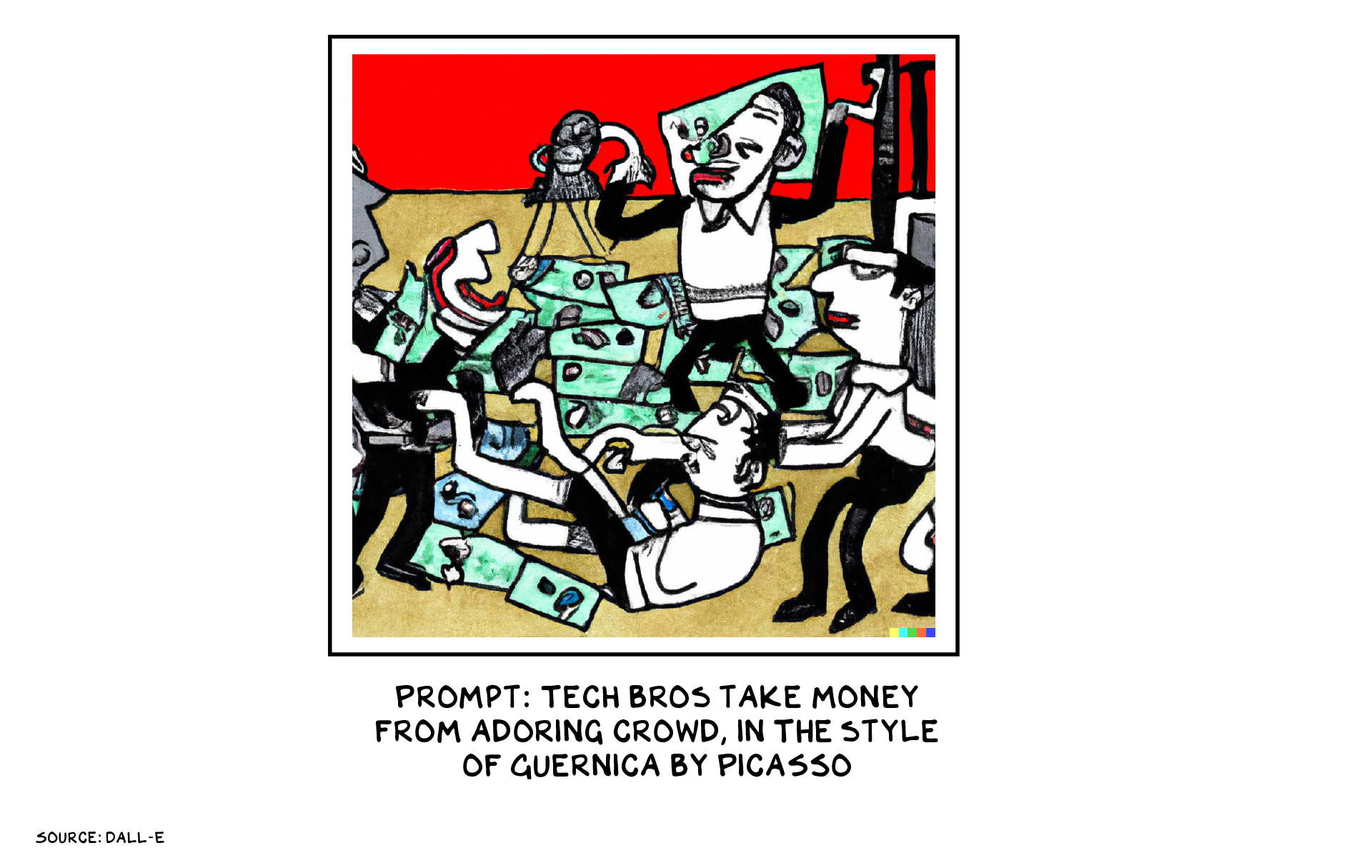

Within a few months of one another, three different image-generating AIs were made public. Dall-E, Midjourney, and Stable Diffusion are variations on a theme. You enter text, and in a few seconds the system generates an image. Ask for “a portrait of an alien with Kodak Professional Portra 400 film stock, shot by Annie Leibovitz” or “Cookie Monster reacting to his cookie stocks tanking” or “Homer Simpson as a Greek god statue,” and boom. Dall-E was developed by OpenAI, a company originally funded by, among others, Elon Musk. OpenAI also received a billion-dollar investment from Microsoft. The app has 1.5 million users producing 2 million images a day. Stability AI, the company behind Stable Diffusion, closed a $100 million round last month. Midjourney is already turning a profit; one of its images was an Economist cover, and another won a prize at the Colorado State Fair. A sign of things to come: They’re approaching the web traffic enjoyed by major stock photo houses.

Coming soon are text-generating AIs. The user enters a text prompt and asks for a type of output (promotional email, blog post, FAQ), and the system spits it out. Jasper, an AI writing tool, recently closed a $125 million funding round. Microsoft’s GitHub Copilot, which has been in the field for a year, offers programmers suggestions as they code, a more robust version of Google Docs’ type-ahead recommendation feature. GitHub claims that once coders enable Copilot, they let it write 40% of their code. Look for a profusion of “Copilot for ___” apps coming soon. These systems work best in structured environments such as coding. They struggle in more freeform areas such as humor. Alan Turing suggested this test for artificial intelligence: Could it convince us it was human? But a better test may be whether it can make us laugh. See above: Fuzzy.

These systems could supplement or replace human creators in many sectors. They can be powerful tools for ideation, generating many variations on a theme, particularly something that can be subsequently tweaked and improved by a human artist. Designing logos and writing tag lines, for example. AI is already being used by some media outlets to generate passable stories on topics including local sports, where there is ample data from which the system can create text. (“The Giants led at halftime, only to fall behind by 14 when the Mustangs scored two touchdowns in the third quarter.”) Video generation is under development, and with it, customized media. (“Siri, show me a 110-minute movie about a handsome bald man who overcomes great odds to make his fortune as the world’s greatest bartender, in the style of Stanley Kubrick.”)

The profusion of generative AI systems stems from a technical breakthrough made a few years ago by researchers at Alphabet’s Google Brain research group. These systems are “neural networks,” software that “trains” by reviewing examples (poems, recipes, faces, songs) to build an internal map of references. (It does not store the examples themselves in a library, just as our brains don’t store images of things we remember.) Once a system has reviewed a few million faces, it can reliably distinguish a fresh face from a face it has seen before or, in the most advanced form, produce an entirely new face.
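The idea of “training” on examples and keeping only an internal map can be sketched with the simplest possible neural network, a single artificial neuron (a perceptron). This is a toy illustration, vastly smaller than the networks behind Dall-E or Stable Diffusion: the two-number features and labels are made up, and the function names are hypothetical. What carries over is that learning adjusts a handful of numbers (weights), and once training ends those numbers are all that remain.

```python
def activation(weights, bias, features):
    """Weighted sum of the inputs plus a bias: the neuron's raw response."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def predict(weights, bias, features):
    """Fire (1) if the response is positive, otherwise stay quiet (0)."""
    return 1 if activation(weights, bias, features) > 0 else 0


def train_perceptron(examples, epochs=20, lr=0.1):
    """Classic perceptron learning rule on (features, label) pairs, label in {0, 1}.

    Each mistake nudges the weights toward the right answer. Only the weights
    and bias are returned; the examples themselves are not kept.
    """
    weights, bias = [0.0] * len(examples[0][0]), 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = label - predict(weights, bias, features)  # -1, 0, or +1
            weights = [w + lr * error * x for w, x in zip(weights, features)]
            bias += lr * error
    return weights, bias


if __name__ == "__main__":
    # Made-up two-number "features"; 1 = the pattern we want to recognize.
    examples = [([0.1, 0.2], 0), ([0.2, 0.1], 0), ([0.3, 0.3], 0),
                ([0.9, 0.8], 1), ([0.8, 0.9], 1), ([0.7, 0.9], 1)]
    weights, bias = train_perceptron(examples)
    del examples  # the "internal map" is just the weights and bias
    print(predict(weights, bias, [0.85, 0.85]))  # 1: a fresh example, never seen
    print(predict(weights, bias, [0.15, 0.15]))  # 0
```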

The Google breakthrough is called a “transformer” — it’s better than previous systems at seeing the whole picture (or text, or DNA strand, etc.). Computers, even advanced AIs, like to proceed step by step, and aren’t good with context. This is why a lot of AI-generated art looks like a bad Picasso, with noses coming out of the side of heads and people with no arms and too many legs. The system knows a face has a nose, but it’s not good at keeping the nose in the right place relative to the eyes and mouth. The transformer design uses clever tricks so the system can “think” about noses and eyes and mouths at the same time. Once transformer neural networks came online, improvements came fast. These two images were generated just a few months apart — the images are not perfect, but improving.
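The “think about noses and eyes and mouths at the same time” trick is, at its core, an operation called scaled dot-product self-attention. Below is a minimal pure-Python sketch under simplifying assumptions: the inputs serve directly as queries, keys, and values (a real transformer first multiplies them by learned matrices and runs many attention “heads” in parallel), and the three face-part vectors are hypothetical. Every part is compared with every other part in one pass, and each output is a weighted blend of all of them, which is how context from the whole picture reaches each position.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    top = max(scores)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Simplified scaled dot-product self-attention (no learned projections)."""
    d = len(vectors[0])
    outputs = []
    for query in vectors:
        # Compare this part against every part, all in one pass.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
                  for key in vectors]
        weights = softmax(scores)
        # Each output is a weighted blend of all inputs: context from everywhere.
        outputs.append([sum(w * v[i] for w, v in zip(weights, vectors))
                        for i in range(d)])
    return outputs


if __name__ == "__main__":
    # Hypothetical 2-number descriptions of three parts of a face.
    parts = {"eyes": [1.0, 0.0], "nose": [0.0, 1.0], "mouth": [1.0, 1.0]}
    for name, row in zip(parts, self_attention(list(parts.values()))):
        print(name, [round(x, 3) for x in row])
```

Older step-by-step designs pass information along one position at a time; here the whole comparison table is computed at once, which is the design choice that lets the model keep the nose in the right place relative to the eyes and mouth.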

Hype Cycle

Froth accompanies turbulence, and with the increased pace of AI, the hype machine is kicking into gear. Generative AI images are the Bored Apes of AI (which is a thing), and no doubt there will be AI scams, or people with large social media followings pumping and dumping AI-centered investments, tokens, projects, etc. The comparison with crypto is worthwhile. Partly as a cautionary tale, but also for contrast.

Crypto was supposed to change the world. Before sinking into Bahamian sand, FTX likened itself to the invention of: the wheel, the toilet, coffee, the Constitution, the lightbulb, the dishwasher, space travel, and the MP3 player. Crypto is half of a revolutionary technology. The promise half: digital money that removes intermediaries and trust. Created by an entity known only as Satoshi Nakamoto and running on something called a blockchain, opaque enough to appear to have massive promise. Unrealized potential. All of which attracted capital. More capital meant more storytellers, more storytellers meant more promise, more promise meant more capital, and the wheel turned.

To date, the new wheel is a highly levered Ponzi scheme, because crypto is missing the other half of any enduring technological innovation: utility. We know AI has utility. It’s powering our search engines, medical research, and fraud detection. Judge a technical revolution by its utility, vs. hype. The year 2022 is not the crypto equivalent of 2000 for the internet, because in 2000 most of us were already using the internet.

Similar to any tech that scales, or any mechanism that refines one thing into another, creating economic value, AI brings externalities. AIs that evaluate résumés tend to favor White men, because that’s who’s in the sample set. AIs that evaluate crime risk tend to disfavor Black men, for similar reasons. It’s computationally intensive and requires expensive data centers that only governments and the wealthiest companies can afford. But it has a story, and utility. Something 2021 and 2022’s technologies (Web 3.0 and the metaverse) did not offer.
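The résumé example can be reproduced with a deliberately naive, hypothetical screener trained on synthetic data. It is a sketch, not any real hiring system: the groups, numbers, function names, and scoring rule are invented for illustration. Because the model learns only from past decisions, a group that is scarce and rarely hired in the sample set is scored down regardless of a candidate's skill. Real systems rarely use a protected attribute directly, but proxies correlated with it can carry the same skew.

```python
from collections import Counter


def learn_hire_rates(history):
    """'Train' a deliberately naive screener on past decisions.

    history: (group, was_hired) pairs. The model is simply each group's
    historical hire rate, so any skew in the past is copied into it.
    """
    seen, hired = Counter(), Counter()
    for group, was_hired in history:
        seen[group] += 1
        hired[group] += int(was_hired)
    return {group: hired[group] / seen[group] for group in seen}


def screen(model, group, skill):
    """Hypothetical score: a candidate's skill scaled by their group's past hire rate."""
    return skill * model.get(group, 0.0)


if __name__ == "__main__":
    # Synthetic sample set: group A dominates past applicants and hires.
    history = ([("A", True)] * 40 + [("A", False)] * 40
               + [("B", True)] * 2 + [("B", False)] * 18)
    model = learn_hire_rates(history)
    print(model)  # {'A': 0.5, 'B': 0.1}
    # More skill, lower score: the sample set, not the candidate, decides.
    print(screen(model, "B", skill=9) < screen(model, "A", skill=7))  # True
```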

Predicting the Future

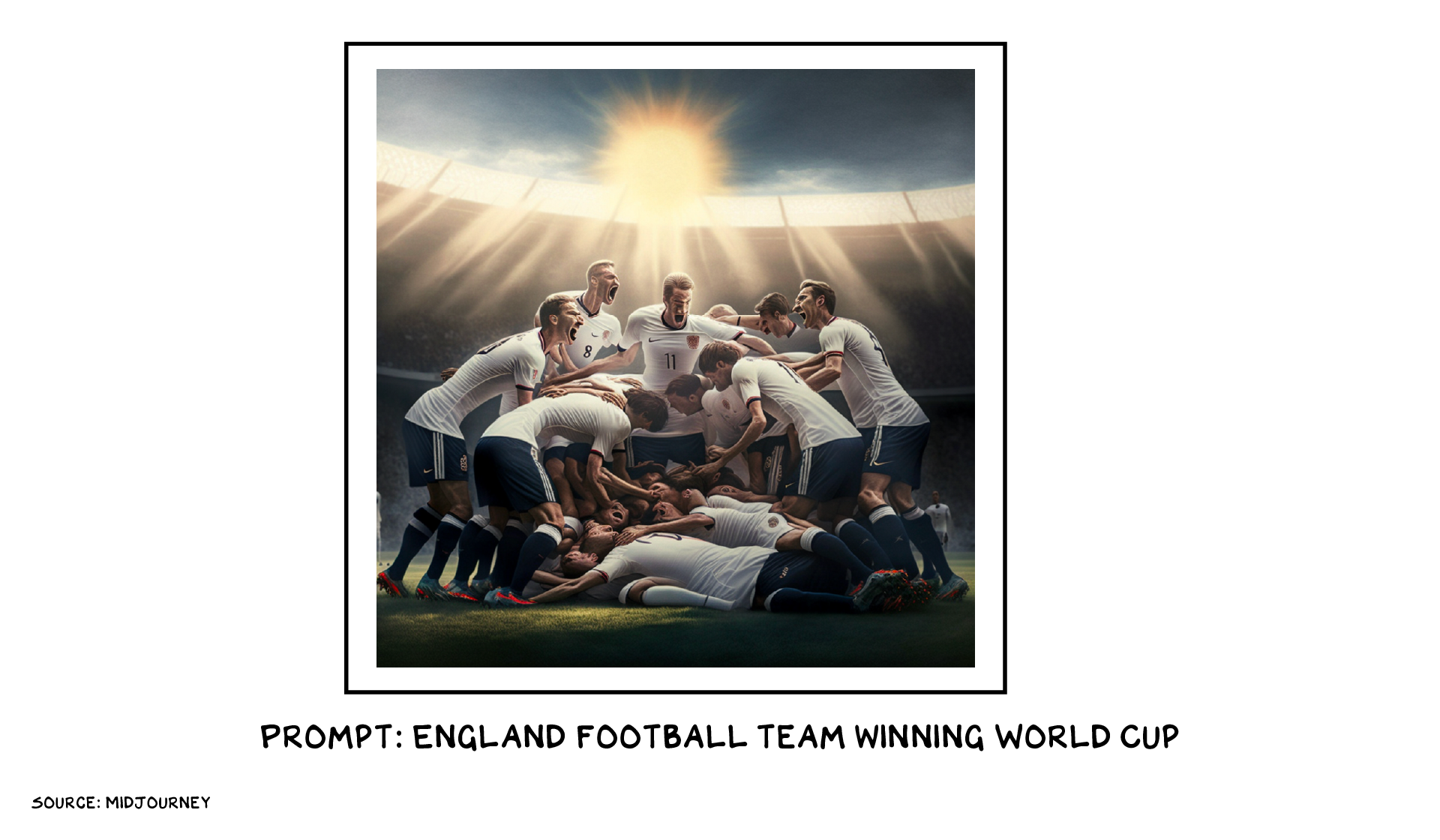

AI still can’t predict the markets, much less the future. The best method, still, of predicting the future is to make it. In three weeks I will travel to Doha with two rabid fans (my 12- and 15-year-old sons) who will demonstrate enormous vocal and emotional support for a team so as to pull the future forward and witness the following:

Similar to HAL 9000, I’d like to believe I can dream.

Life is so rich,

P.S. Making predictions is a shitty business – yet I persevere. Predictions 2023 is happening on December 7.

Well, AI may help the top 10-20%, but it offers no solutions for giving the bottom 80% a meaningful life or a livable planet. As everything continues to be monetized, those with no money have no place in a new world.

Scott, how can we trust your forecasts after the article about the war with Ukraine? Ha-ha. And at the World Cup, we wish England victory!

I can see millions more jobs degraded or disappearing as AI advances… I teach writing at a uni. ‘Write me an essay in the style of…’ Yeah, great…

Why is Scott supporting England in the World Cup? I know Scotland, his heritage, is not available but the US (his home country) or Wales (non-England in the UK) seemed like better options.

Scott – it kills me every time I read that you are corrupting my rum with Coca-Cola. It should be touched by nothing more than an ice cube and a squeeze of a lime wedge. Stop the madness!

First of all, congratulations on the look of the charts; they are very clear. Are they done with Python/R or through Adobe InDesign? What is the name of the font?

Surely, with your Scottish heritage, you can’t support England!

I can’t not say it: did we not learn anything from Terminator?

AI is a great tool but requires human intervention. The recent KFC nightmare in Germany will give most brands pause before turning over marketing to AI. The pain caused by this debacle and the resulting PR disaster isn’t worth any cost savings that AI provided.

We should listen to nature not to tech. But man thinks that he is smarter and can rule everything. Not true.

Interesting that your article points out that AI favors White men over Black men, and immediately after, you post an AI-generated image of England winning the World Cup in which none of the men are obviously Black (which is not at all reflective of the squad). Interesting.

Scott,

Why, if “utility” is so critical to successful technology, does this article focus on the most trivial, adolescent video generation prompts?

And as for the family trip to Doha, when it comes to the corrupting effects of income inequality, you talk a good game but you don’t walk the walk. Attending the Qatar World Cup is an admission that a) you have too much money; b) you have powerful connections in the wrong places; c) being entertained is more important than being ethical. Soccer is indeed a great game, but World Cup Soccer is and has been for a very long time irredeemably corrupt. https://www.nytimes.com/2022/11/19/sports/soccer/world-cup-qatar-2022.html

Anyway, have fun!

Didn’t Google quietly retire the AI they were using to evaluate startup ideas for investment? I read something about it but now I can’t find the reference.

Doha. Oil blood money for fútbol.

Yet save all the HATE for golfers.

The best part of this was you calling investing a semi-skilled task.

Dear Scott, as always a pleasure to read your thoughts.

With respect to Predicting the Future, I am not going to Doha, but I am an experienced Uruguayan footballer, economist, and statistician, and with my Natural Intelligence (NI) + some algorithms + principal component analysis, I can predict with 95% confidence that England may reach the final if it can beat France in the round of 4 and Uruguay in the semifinal. In that case, England will play Brazil or Argentina, and in both cases has around a 40% probability of winning the final vs. 60% for its rival. From the beginning of the World Cup, England’s overall probability of winning the Cup is approx. 15%, Brazil 20%, Argentina 18%, France 16%, Germany 11%, and Spain, Belgium, Portugal, the Netherlands, and Uruguay 4% each. Enjoy the games, and let’s stay in contact after December 18 to check these predictions, and after yours of December 7 about the coming year 2023. Receive a hug from the South of the Americas. Paul Gersten

Dear Scott, as always a pleasure to read your thoughts.

With respect to Predicting the Future, I am not going to Doha, but I am an experienced Uruguayan footballer, economist, and statistician, and with my Natural Intelligence (NI) + some algorithms + principal component analysis, I can predict with 95% confidence that England may reach the final if it can beat France in the round of 4 and Uruguay in the semifinal. In that case, England will play Brazil or Argentina, and in both cases has around a 40% probability of winning the final vs. 60% for its rival. From the beginning of the World Cup, England’s overall probability of winning the Cup is approx. 15%, Brazil 20%, Argentina 18%, France 16%, Germany 11%, and Spain, Belgium, Portugal, the Netherlands, and Uruguay 4% each. Enjoy the games, and let’s stay in contact after December 18 to check these predictions, after yours of December 7. Receive a hug from the South of the Americas. Paul Gersten

Scott is always ahead of the curve. I was at a big 35,000-attendee conference this week (https://www.iaapa.org/expos/iaapa-expo), and there was a presentation all about Midjourney and image-generating AI. So as my grandfather used to say, “there ya go.”

Here are two existential macro-trends for the US and the planet Earth. For the US, more than a half century of growing inequality. For the planet, worsening climate chaos. Both will result in increasing conflict. With climate change, we can expect more drought, famine, floods, fires, disease, and war. Things could change for the better, but for now it looks like we are traveling back to the future: to Herbert Spencer and Thomas Malthus.

Clearly Scott is becoming old and his ego is getting more & more in the way. “Tech has moved to the front of the house…” proves you are totally outpaced, outsmarted, … and just babbling data without any profound thinking behind it.

“Life is so rich,” your best comment on this post.

I agree with this line: “They can be powerful tools for ideation, generating many variations on a theme, particularly something that can be subsequently tweaked and improved by a human artist.”

We are using Gen AI to create designs for product images, using our data set to train the Gen AI, but the images are not useful until our team updates them w/ the actual product and branding. We have that covered, and the virtuous cycle continues – more data -> better images.

So adding AI to a process where it can enhance the results works very well and creates an ever-improving cycle for the Gen AI. Functional results now that are better and more cost-effective.

A couple of thoughts on AI after a career in tech spanning from the invention of Pong to the early 2000s. I spent many years on strategy development for tech companies, and this is absolutely true: “Judge a technical revolution by its utility, vs. hype.” Just because something can be done doesn’t give it value. Also, AI algorithms are stupid. Personally, I rarely need to see more of the same, and AI has no way to figure that out. It needs to act like a researcher, less like a sheep. FWIW, I do have visual memories. Thanks for this week.

Hi Scott, why do you say that crypto is missing utility when it is barely in its infancy?

I would refer you to the commentaries, online and in book form, by my friend Gary Marcus, who is incisive about the innate limitations on AI as currently practiced. I can clumsily sum up his critique by saying that AI lacks what we know as “common sense”.

I’m a digital stock artist and have been for the last 15 years. I’ve already lost 50% of my income this year, as some stock agencies decided to invest in AI instead of artists. What advice would you give to people who are being automated? There are millions of us, and AI is just getting started. Thanks!

Dear Scott, as always a pleasure to read your thoughts.

With respect to Predicting the Future, I am not going to Doha, but I am an experienced Uruguayan footballer, economist, and statistician, and with my Natural Intelligence (NI) + some algorithms + principal component analysis, I can predict with 95% confidence that England may reach the final if it can beat France in the round of 4 and Uruguay in the semifinal. In that case, England will play Brazil or Argentina, and in both cases has around a 40% probability of winning the final vs. 60% for its rival. From the beginning of the World Cup, England’s overall probability of winning the Cup is approx. 15%, Brazil 20%, Argentina 18%, France 16%, Germany 11%, and Spain, Belgium, Portugal, the Netherlands, and Uruguay 4% each. Enjoy the games, and let’s stay in contact after December 18 to check these predictions, and after yours about the coming year 2023 on December 7. Receive a hug from the South of the Americas. Paul Gersten

Why do you feel it necessary to post the same thing THREE times in this comments section?

Is this an open question?

Skilled workers of all kinds will have to do what chess grandmasters now do: embrace the machines, use them to produce faster and better work, and edit and manipulate their inputs and outputs to combine our skills with theirs.

Whenever skilled jobs are displaced, the answer is similar. What sets you apart – a visual talent, and a trained hand and eye – gives you a head start in controlling and steering the new technology, and assessing and improving its output.

An unacknowledged appropriation from the alternative title for the “Blade Runner” movie. “Do Androids Dream of Electric Sheep?”