Guardrails

Audio Recording by George Hahn

Last week we learned about a significant leak of classified material that exposed key details of the Ukrainian war effort and America’s security apparatus. The perpetrator? Not an extremist group or criminal network, but someone we’re more familiar with. A young man who spends too much time online.

Technology and our inability to regulate it have again made things worse. Much worse. The leaker’s preferred platform was Discord, which has been used to share child pornography and coordinate the white supremacist riots in Charlottesville. Discord is not alone. Recently, Instagram assisted the suicide of a young British girl by serving her images of nooses and razor blades. Facebook fueled a mob riot in Myanmar. The list goes on: Teen depression, viral misinformation, widespread distrust of national institutions, polarization, algorithms optimized for rage and radicalization … We’ve discussed this before.

What’s startling about this latest scandal is the banality. A reckless young man trying to gain social status online accidentally shapes world events. Steve Jobs called computers “bicycles for the mind” because they amplify our capabilities dramatically. It’s a nice image. A more apt analogy for many young men, however, is a Kawasaki H2 Mach IV, a motorcycle that possessed far too much power and had a rear-biased weight balance that made it an accident waiting to happen. Too much power, not enough balance, and injury is inevitable. Tech has become a bullet bike, a reliable source of disturbing accidents and organ donations.

Stupid Proof

People have always been stupid, and everyone is stupid some of the time. (Note: Professor Cipolla’s definition is people whose actions are destructive to themselves and to others.) One of society’s functions is to prevent a tragedy of the commons by building safeguards to protect us from our own stupidity. We usually call this “regulation,” a word Reagan and Thatcher made synonymous with bureaucrats and red tape. Yes, Air Traffic Control delays and the DMV are super annoying, but not crashing into another A-350 on approach to Heathrow, not suffocating as your throat swells from an allergic reaction, and being able to access the funds in your FTX account are all really awesome.

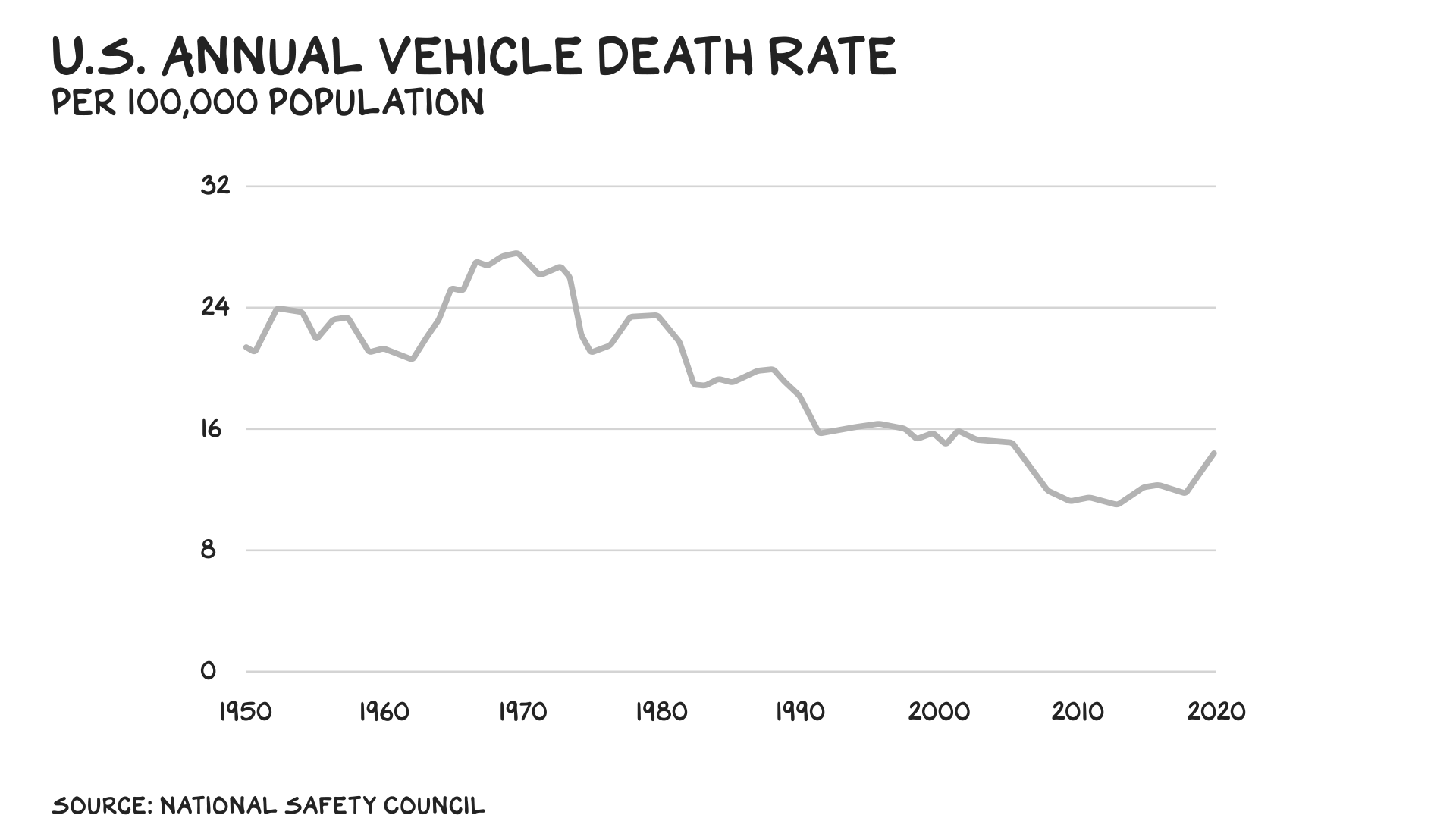

Sixty years ago the U.S. registered more than 50,000 car crash deaths annually. So we created the National Highway Traffic Safety Administration and charged it with making the roads safer. If you’re under 60, this may be hard to imagine, but not that long ago, many Americans saw seat belts as an assault on their personal liberty — some cut them out of their cars. Democracy bested stupidity, however, and between 1966 and 2021, vehicular death rates in America were halved.

The NHTSA is one of the many boring state and federal agencies critical to a healthy society. Before the Food and Drug Administration, the sale and distribution of food and pharmaceuticals was a free-for-all. The Federal Aviation Administration is the reason your chances of dying in a plane crash are 1 in 3.37 billion. Next time someone tells you they don’t trust government, ask them if they trust cars, food, painkillers, buildings, or airplanes.

The limits on innovation imposed by these agencies — their red tape — are real, and worth it. Millions of us are alive and prospering because we had the foresight and discipline to blunt the sharp end of industrial progress with the guardrails of democratic oversight. Until you open your phone …

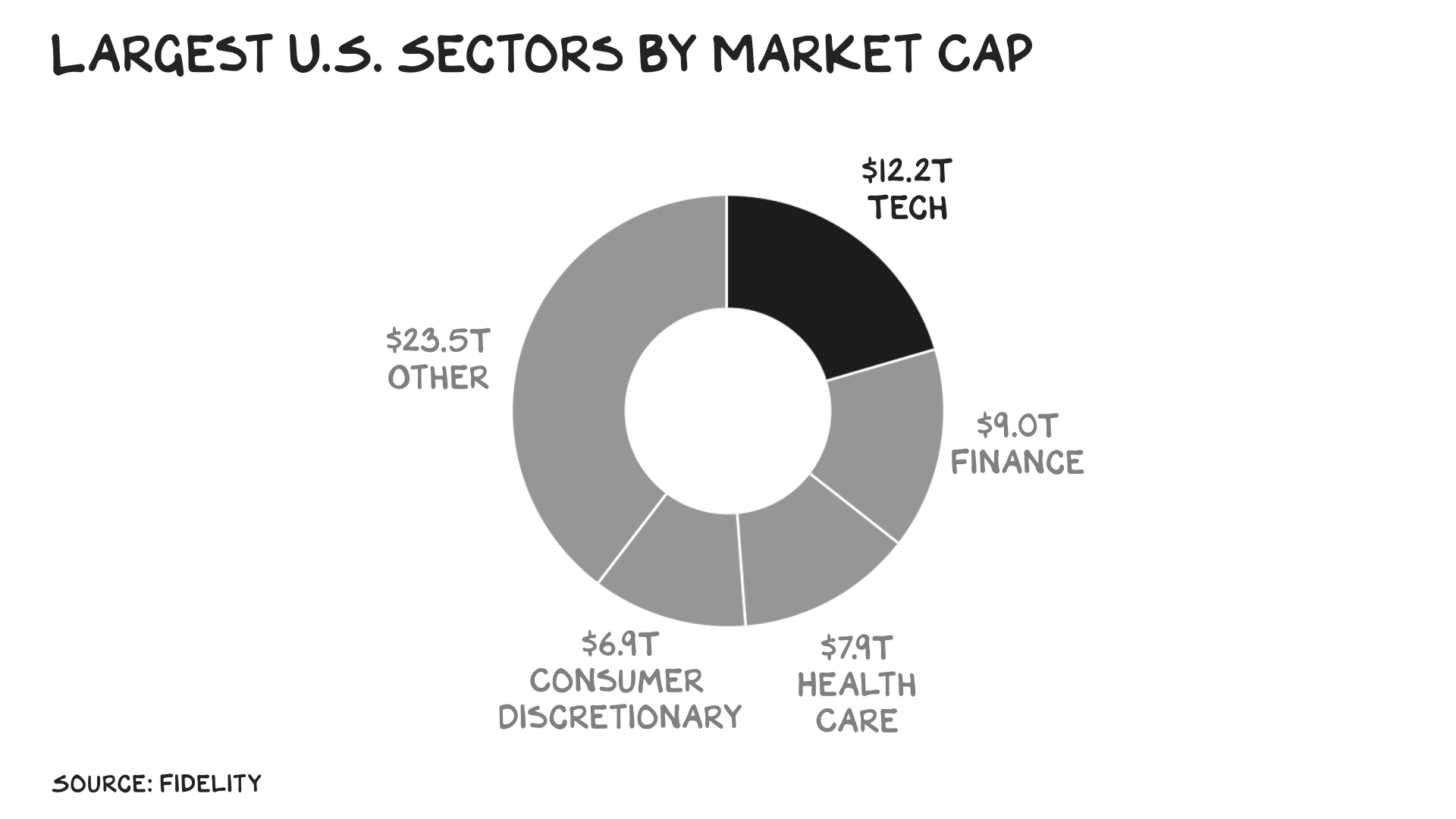

Exempt

The greatest anomaly in the history of U.S. regulation is the place more and more of us spend most of our time: online. A lethal cocktail of complexity, lobbying, cultural worship of tech leaders, and anti-government libertarian screed has rendered tech immune to the basic standards of safety and protection. Lethal is the correct term. Tech comes into the purview of other agencies on occasion. (Though it’s always bitching it’s special and shouldn’t be restrained by the olds at the FTC and DOJ.) And the industry’s blocking efforts have been effective. There is no FDA or SEC for tech, which is America’s largest sector by market capitalization and growing.

The justification for this was the go-to new-economy get-out-of-jail-free word: “innovation.” When tech was nascent and niche, we were smart to err on the side of growth vs. regulation. That movie ended a decade ago. Phones aren’t toys for early adopters, and search and social have moved beyond campuses. We don’t require a license to drive a Big Wheel, but if a Big Wheel 5G went 750 miles per hour we might restrict access, or at least demand airbags.

The go-to narrative for these platforms after every new disaster is the delusion of complexity. And that the Internet is just another communications technology (e.g., the phone, a letter), a reflection of society, and it would be near-impossible to put guardrails in place. Also, sprinkle in some blather re free speech. This is all bullshit. AI can write a Seinfeld script in the voice of Shakespeare. It can scan platforms for words and images associated with risks — they’re already doing it for signals you might be shopping for Crocs. But what’s the incentive for a platform to make the investment in any editorial review? Other than decency and regard for others, that is?

We’ve done a good job stupid-proofing the offline world, but that’s increasingly not where we live, especially younger people who now spend roughly the same amount of time online as they do sleeping. They (we) pass the majority of our waking hours riding in a vehicle with no airbags, licenses, or traffic lights. Plus, there are millions of autonomous vehicles on the road controlled by unknown actors, and they’re prone to running over pedestrians.

Operation Pause

Tech is embarking on its next big adventure: artificial intelligence. Which likely means rapid innovation, increased productivity, and another tsunami of unforeseen societal harms. Predicting how AI will tear the fabric of civilization is the new bingo. Humanoid phishing scams that access bank accounts, AI-generated “camera footage” and headlines that make the truth increasingly opaque, rogue AI gods determined to eradicate humanity. Experts agree: All of this is possible.

What’s telling is the technologists’ collective reaction to their own creation. For the first time, they want to slow down. A few weeks ago they wrote a petition calling on AI labs to “immediately pause” all training of the most powerful AI models for six months — hundreds of tech leaders signed the letter. The CEO of OpenAI, Sam Altman, who started the hype spiral with ChatGPT, says he is “scared” of his company’s own algorithms. One AI expert said the six-month moratorium isn’t harsh enough; instead, he says, “shut it all down.” What undermined the veracity of the letter was one signatory, Elon Musk, who’s asked others to pause as he fast-tracks his own AI programs. (As usual, he’s full of shit.)

Both Hands

We should grab this opportunity with both hands. Specifically, both hands on the wheel. Not a “pause,” which, in my view, is a bad idea. (China, Russia, and North Korea won’t pause.) If the guy who just disappeared my blue check hadn’t been kicked out of OpenAI, and controlled it instead, do you believe he’d be advocating for a pause? Better idea: The 78 podcasts that garner more downloads than the Prof G Pod should suspend their programming. You know … just to get our arms around this new, and potentially dangerous, podcast medium.

But we do need to seize this moment, likely brief, when some tech leaders have remembered the virtues of government oversight. We need a serious, sustained, and centralized effort at the federal level, perhaps a new cabinet-level agency, to take the lead in regulating … we can call it AI, because pretty soon AI will be everywhere in tech.

There have been efforts at comprehensive technology regulation we can pick up and carry across the finish line. For example, last year Senator Michael Bennet proposed the Digital Platform Commission Act, which would create a federal body to “provide reasonable oversight and regulation of digital platforms.” In other words: Exactly what we’re talking about. But it’s still stuck at the “introduced” stage. As with any political issue, it needs public support.

What won’t work is fake regulation — when the government issues broad, vague statements about what companies should generally do. That’s what Biden did with crypto, and he’s doing it again with AI. Specifically, his “Blueprint for an AI Bill of Rights,” which is filled with truisms, platitudes, and no laws. Similarly, the NIST published its “AI Risk Management Framework.” Again, not laws.

Psychotics and the Homeless

Earlier this month, a tech executive was tragically stabbed and died. Outspoken members of the tech industry immediately speculated the killer was a “psychotic homeless person.” A few days later, an acquaintance of the victim (also a tech entrepreneur) was arrested and charged. Note: The homeless are more likely to be victims of crime than perpetrators.

The above is another variation on a story told repeatedly across an innovation economy where we have incorrectly conflated wealth and innovation with character. A growing vein of the tech community (Venture Catastrophists) deploy weapons of mass distraction and fear to wallpaper over an inconvenient truth: The menace unleashed on America the past two decades isn’t psychotic homeless people or a crime wave, but a tech community whose products depress our teens, polarize our public and render our discourse more coarse … making it less likely we come together and address issues including homelessness and crime. Our failure to regulate this sector, as we have done with every other sector, is stupid.

Life is so rich,

P.S. This week on the Prof G Pod I spoke with Stephen Dubner, host of the Freakonomics Radio podcast. We talked Adam Smith, private equity, and the seven deadly sins — tune in.

P.P.S. Want to innovate in a way that doesn’t destroy the world? Join me for free on May 16 to discuss How to Have a Breakthrough. Come with questions.

While I agree with the premise of the problems regarding what is increasingly becoming a conglomeration of media and tech company quasi market states beholden only unto themselves, I feel that the core arguments presented are wildly generalistic to the point of ad hominem rather than dissecting what the issue is. For example, tarring Discord because “it can be used to send child pornography” shows a lack of understanding of what the internet as a communication medium is. Paedophiles have transmitted child pornography in the post. Do we demand that every envelope be opened, message read, picture checked by an unknown and unknowable censor “just in case”? To extend this to the statement about teen depression on the internet, the obvious conclusion is that no two humans should be allowed to interact without a trusted third party on hand, just in case one of them communicates information that (or in a manner that) causes a negative emotional state in the other. Obviously this is farcical.

This is a new spark

Today I heard this presentation by the Center for Humane Technology [https://www.youtube.com/watch?v=xoVJKj8lcNQ&t=8s] and it made me think of your article here. I am gobsmacked at the height of the exponential curve we are already in with AI, and don’t even know what else to do but ask as many humans as possible to support a Pause – while simultaneously becoming smart about AI so we can rein in mindless tech.

We get the (lack of) regulation the industry buys. Tech had a slow ramp – but they’re catching up quick. The #1 industry segment lobbying in D.C.? Healthcare. The #2 industry? Electronics Manufacturing and Equipment – which spent $221M lobbying in 2022.

“Campaign finance reform isn’t the biggest problem facing the country – but it’s the first.” Lawrence Lessig

So interesting to me how many readers drank the deregulation Kool-Aid of the past 40 years and actually believe the BS circulating since the Reagan era that government is useless and the less we have of it, the better. I understand it’s been in the air we breathe all this time, and on a good day, I sympathize. But we also can see that members on one side of the political spectrum in particular have done their best to make their belief in bad government true by breaking government and making it less effective. And EVERY TIME (forgive the hyperbole) the conservatives and libertarians have limited regulation for the banking industry (since the 80s at least), bankers commit destructive business practices and damage the economy–and primarily get off scot-free.

So I agree with Scott. Government is us, and we can do good through our collective actions. And there are plenty of us whose self-interests or whose Wall Street motivations need to be regulated for the sake of the others of us. To the best of our ability, we all need to take part in the solutions–both individually and communally.

There is already a guardrail in place, which is that free societies don’t let governments control information content or flow. And you want to do away with that because of “societal harms”? Well, we’re not infants, so you’ll have to make a much better argument than that. Sorry your blue check was disappeared, but we’ve seen enough government-directed misinformation around Iraq, Covid and political oppositions (by both parties), and do not want to give them more control.

I’m not anti-regulation, but this recycles the simplistic dismissal of real problems we have with agency inertia. How many hundreds of thousands in this country have died of heart disease because we were much later than Europe to approve beta blockers, or deaths of despair because we have lagged on MDMA/Psilocybin treatment for mental illness and addiction? Yeah, we don’t want airplanes crashing into one another, but the number of deaths that result from regulation preventing consenting adults from leading their own lives makes singular lapses (often due to regulatory capture!) pale by comparison.

As always, a great read. The concept of a mandatory public service program fully fleshed out with a meaningful menu of options for those 18-20 years of age might be one way to divert some attention from the phone screen.

Take care.

Who the heck trusts our food system? It is built on capitalism, and food is built to travel, not for nutrition or health.

This is a thoughtful and timely commentary on a critical issue with broad ramifications. It struck me that many of the opening points about self-interest, lack of appreciation for the impact of responsible regulation and ramifications of doing little or nothing could apply to gun violence. Arrogance, intentional ignorance and judicial idiocy perpetuate increasingly dangerous situations. I’ve been encouraging the media and blogs like this to introduce each mass shooting as “Another Republican-enabled assault weapon atrocity … [fill in the details].” Shall we try something similar for AI perversion?

Neoliberalism has few (if any) good answers. And it crushes good answers that resist its hegemony.

Love this piece (and most of your other posts). Articulated so well. A lot of smart people are converging towards real regulations, and it’s not a nice-to-have but a must.

Excellent points! But I can’t help thinking, somewhere, Bernie Madoff is laughing his head off (regarding “regulations”).

“What won’t work is fake regulation — when the government issues broad, vague statements about what companies should generally do.” – Ironically this statement describes almost exactly what this article, and so many other articles journalists have written as of late, have been doing. Buzzwords like “misinformation” and “harm” have become feel good platitudes meant to make people feel informed and smart without having to suggest real, tangible policies.

Thank you! A good analysis of “the problem”. I think you are right on the money when you talk about the good side of regulation and regulatory agencies. The rest needs to be openly discussed. Reagan and Thatcher saw the need to unleash and protect private initiative and not destroy government, just to un-regulate to limit the size and power of government so it wouldn’t turn against “we the people”.

Elon Musk has become a scapegoat and a caricature of big business, strong leadership and true American entrepreneurship. If you leave him out of your discussion your argument is probably going to be stronger and more bipartisan. This is an issue that concerns us all, not only in the States, but everywhere, so we need an open, mature, serious, in-depth discussion. What is at stake? An awful lot!

One last thing: not only youngsters are vulnerable to the “vices” associated with “the screens”.

We need to sit down and discuss this ad nauseam.

Keep up the good work and “the search for truth wherever it may lead”!

Regards.

Thank you for this article, a wake up call for “modern society”. It will take outstanding local and global leadership to regulate AI and toxic social media consumption. Awareness starts from early age education.

Kawasaki H2?

I bet you got that here…..

https://www.webbikeworld.com/most-dangerous-motorcycles-ever-released/

I think Hayabusa would have been more accessible, or maybe a Ducati 900SS a la Hunter S. Thompson.

https://www.visordown.com/features/general/hunter-s-thompson-reviews-ducati-900ss

It pains me to write this because I’m a Scott fan but here I go.

Scott wants to rely – sigh – on government regulation to save the day here. Why not (sarcasm trigger alert for Gen Z)?

Government performed fantastically in preventing Silicon Valley Bank from tanking, inflation from raging, interest rates from skyrocketing, the pandemic from spreading, and the Great Recession from smacking down millions of homeowners 10 years ago. Yeah, let’s have more of that.

Scott says Elon is “full of sh!t”. The market – which Scott often lauds – disagrees.

Elon bet millions to fire a rocket into space this week, and it failed. He’ll try again. And again, and again, until it works. That’s what real entrepreneurs do.

What did you do this week Scott? Apart from take shots at entrepreneurs . . . . ?

The bank’s failure could have been avoided by the stress testing that was required of banks of SVB’s size until the passage of the EGRRCPA, the Economic Growth, Regulatory Relief, and Consumer Protection Act, which was signed into law by President Donald Trump in 2018.

That’s the bill that eased financial regulations such as the Dodd–Frank Wall Street Reform and Consumer Protection Act that was put in place after the financial crisis of 2007–2008.

More specifically, EGRRCPA raised the threshold at which banks are deemed “too big to fail” from $50 billion to $250 billion.

So you just want to ignore that very important piece of the puzzle? If the regulations that WERE in place had not been removed, and the proper oversight was executed, this would have been avoided. Do you also feel that helmets are unnecessary and pointless?

The Nick Fuentes incel army is just the tip of the iceberg of dudes who have been just ruined by the internet. Trying to live up to some nonexistent ideal while getting crushed by income inequality. Yes, some AI regulation with teeth needs to be formulated. Also, the 70’s widow maker H2 has been supplanted by the Z H2, which in street form is reduced to only 197 HP and handles rather nicely. I think that you would enjoy such a luxury. And track time.

Scott: Please end your quest to hand over free speech regulation to Pol-Crats (Politicians and their Bureaucrats). Specifically, your remedy (“regulation”) for Instagram and other platforms that do to “teenage girls” what is analytically no different than “Vogue” and other “Insecurity Magazines” of the past did: Make females just discovering that Beauty = Power feel insecure enough to buy the products that those rags hawk.

You’re not urging the regulation of paper-based rags like Vogue and other “Be Beautiful Today!” magazines. And you know why, too: Because that’s pure First Amendment territory, not to mention pure PARENTING territory (read: parents can and should regulate what their children consume).

Surely you get this — that digital platforms are no different except that they accelerate information delivery (a 15-year-old girl, just discovering that she’s not going to look like a super-model, nevertheless can now access an ocean of “prettier girl” photos and drive herself crazy in record time now).

Your real beef, btw, is with parents who simply won’t mind their kids (regulate their media usage, be it paper- or digital-based). Pol-Crats sure ain’t gonna do any better, RIGHT?

Rethink this, OK? Because your cure is worse than the disease.

Parents: Teach your children well, lest others beckon GQP crazies and other whackos to step in for you.

Kudos!

Why do you guys use so many abbreviations? Being uninformed, I have to switch to Wikipedia to look up their meaning. I am sure that I am not the only one. As your mother told you: “Use your words.”

WOW, what an eye opener! From what I read previously and from using common sense I knew AI would not be as beneficial as these techs touted. As you pointed out our youth is most at risk.

Your faith in government solutions is far far in excess of mine and on this I suppose we will just disagree. I’d prefer free market solutions to all of these “problems”.

Kudos!

The bank’s failure could have been avoided by the stress testing that was required of banks of SVB’s size until the passage of the EGRRCPA, the Economic Growth, Regulatory Relief, and Consumer Protection Act, which was signed into law by President Donald Trump in 2018.

That’s the bill that eased financial regulations such as the Dodd–Frank Wall Street Reform and Consumer Protection Act that was put in place after the financial crisis of 2007–2008.

More specifically, EGRRCPA raised the threshold at which banks are deemed “too big to fail” from $50 billion to $250 billion.

So you just want to ignore that very important piece of the puzzle? If the regulations that WERE in place had not been removed, and the proper oversight was executed, this would have been avoided. How much does lobbying by the “free market” corporations impact our lives? There’s a time and place for everything, and just like government isn’t the answer to all things, neither is the so-called free market.

Yeah, because history is just littered with examples of how the free markets have all our best interests at heart.

So interesting about how an increase in CEO compensation accelerates future compensation and “averages”. It is the same “accelerant” that health insurance companies discovered with “UCR” charges. As the health care providers invoiced greater fees, they pushed the “average” or UCR higher and higher. Most companies no longer use the “R”, which meant “reasonable”.

Dead on. I know many of the so-called tech nobility. Yes, smart, but often lacking common sense. Arrogance directly tied to wealth (with plenty of exceptions).

And many others, if not most, were just plain lucky.

AI is existential if we don’t move quickly to get guardrails up.

Children, teens and young adults are living in a fantasy world and cannot decipher fake from reality. Bring back the age limits to social media platforms and please regulate these little girls who are soliciting sex by showing off their nude or almost nude bodies on their social media accounts. Little kids are being given smart phones, and children as young as 12 are learning what sex is and trying to emulate that. There are real and serious consequences to these billionaire apps that are available to anyone. Technology has caused new and lasting problems.

We have raised a nation of children who have not been allowed to have feelings and emotions. Technology has only exacerbated our inability to have real conversations. Anxiety, anger, and apathy are the driving forces in this country. As always, appreciate your posts!

Once a genie is out of the bottle, it’s out of the bottle. Our gov’t can’t pay attention to anything until the grift opportunity for them is clear. It isn’t clear yet. Thus, they wave hands and do nothing. Eventually, perhaps as it was with bitcoin, the existential threat to their fiat money printing Ponzi games becomes clear and they of course over react. They are amusing little monkeys. Nothing more.

I read about 5x faster than you can speak; isn’t there a no-cost voice-to-text app you can run when live recording or based on the recording?

The rich and powerful often exploit and blame the poor and reject laws that regulate their behavior. It is a cliche that the powerful reject laws (regulations) that limit their freedom but demand protection from the consequences of their behavior; SVB for the latest example.

A compelling and pleasurable read and deeply flawed in its logic.

Technology has amplified what already exists. Initially, with many new technology advancements, there is damage as society learns to adjust. In time, and almost if not always, society ultimately advances and is better off. If one looks through the long lens of history one would see the stunning advancements that have occurred in the last 100, 50 and 25 years.

Aggregate living standards are near all time highs. America remains great and we are living during the best of times as compared to virtually all human history.

In other words, take a collective breath and relax. Human ingenuity always eventually triumphs. Good always eventually defeats evil. Always.

Regarding regulation- Galloway trusts government far, far too much. Most of the benefits cited would eventually appear through the free market. Why? Because if people value safety, people will pay for it, rewarding those that serve their customers.

Of course, there are many reasons for government in a civil society which requires a rule of law and protection of individual rights along with a national defense.

Governments are far more dangerous to civilians than civilians are to themselves. For those that want to look it up- the term is democide.

It’s strange that you’re ignoring the many examples provided in the article showing how government absolutely does provide benefits that would not naturally occur in the free market. Your argument that industry will regulate itself to meet customer demands is pure nonsense, and is proven wrong every day. Your desire for government to play no role other than defense and law enforcement is — to use your words — “deeply flawed”.

Eric, why do you assume they would not naturally occur? There’s no basis for that assumption. Canadians (as an example) routinely pay extra for private health insurance even though they have access to free healthcare, this along with millions of other examples illustrates plainly that people will pay more for things they value when given the freedom to.

Scott committed the “post hoc, ergo propter hoc” fallacy in attributing the benefits of regulation to the regulation, i.e., cars were already getting safer before the regulations, because people want safe cars.

Ty for a common sense rebuttal.

Past performance does not guarantee future results.

Scott,

Recently, I was trying on shoes.

My salesman, a middle-aged man, was fretting about a young man in his family who was gambling online.

I quoted you and said, “no guard rails” and he said, “I’m gonna use that.”

I am building a technology to level the playing field between the large corporations and local businesses. If you feel strongly that things need to change, please reach out; I would love to discuss. Always enjoy the articles and insight.

Was fortunate enough to hear Tom Friedman at our Speaker Series in St. Louis, Tuesday; not surprisingly he focused on technology, AI and the inherent dangers of deregulation.

Thanks for the warning.

I can appreciate your reference to the H2 Mach IV. Rode several back in the day. Stupid fast and handled and stopped poorly. But wild fun 50 years ago.

Musk may be F of S, but I wouldn’t bet against him just yet

Scott, I always enjoy your essays. I may not always agree with your points, but you consistently make me think, and your analysis is always very insightful. This particular piece is excellent. I have a background in national security and have been thinking over the past week about how to deal with recent events, e.g. the Discord leak. As usual, you’ve given me much to think about.

Thanks, Scott. I agree.