Facebook … What To Do?

Don Draper suggested that when you don’t like what’s being said, you should change the conversation. Facebook is trying to change the conversation to the Metaverse. But we should keep our eyes on the prize, and not look stage left so the illusionist can continue to depress teens and pervert democracy stage right. In sum, nothing has changed. And something (likely many things) needs to be done to stop the most dangerous person on the planet. I first wrote the preceding sentence three years ago; it seems less novel or incendiary today.

So what would change things? We the people are not without tools, so long as the nation remains a democracy. We need only find the will to use them.

Toolbox

- Break Up. Forty percent of the venture capital invested in startups will ultimately go to Google and Facebook. The real genius of these companies is the egalitarian nature of their platforms. Everybody has to be there, yet nobody can develop a competitive advantage, as Nike did with TV and Williams-Sonoma did with catalogs. Their advertising duopoly is not a service or product that provides differentiation, but a tax levied on the entire ecosystem. The greatest tax cut in the history of small and midsize businesses would be to create more competition, lowering the rent for the companies that create two-thirds of jobs in America. That would also create space for platforms that offer trust and security as a value proposition, vs. rage and fear.

- Perp Walk. We need to restore the algebra of deterrence. The profits of engaging in wrongful activities must be outweighed by the punishment times the chance of getting caught. For Big Tech, the math isn’t even close. Sure, they get caught … but it doesn’t matter. Record fines amount to weeks of cash flow. Nothing will change until someone is arrested and charged.

- Identity. Identity is a potent curative for bad behavior. Anonymous handles are shots of Jägermeister for someone who’s a bad drunk. Few things have inspired more cowardice and poorer character than online anonymity. By contrast, consider LinkedIn: its users post under their own names, with their photos and work histories public. Yes, some people need to be anonymous. The platforms should, and will, figure this out. Making a platform-wide policy on the edge case of a Gulf journalist documenting human rights violations is tantamount to setting Los Angeles on fire to awaken the dormant seeds of pyrophilic plants in Runyon Canyon.

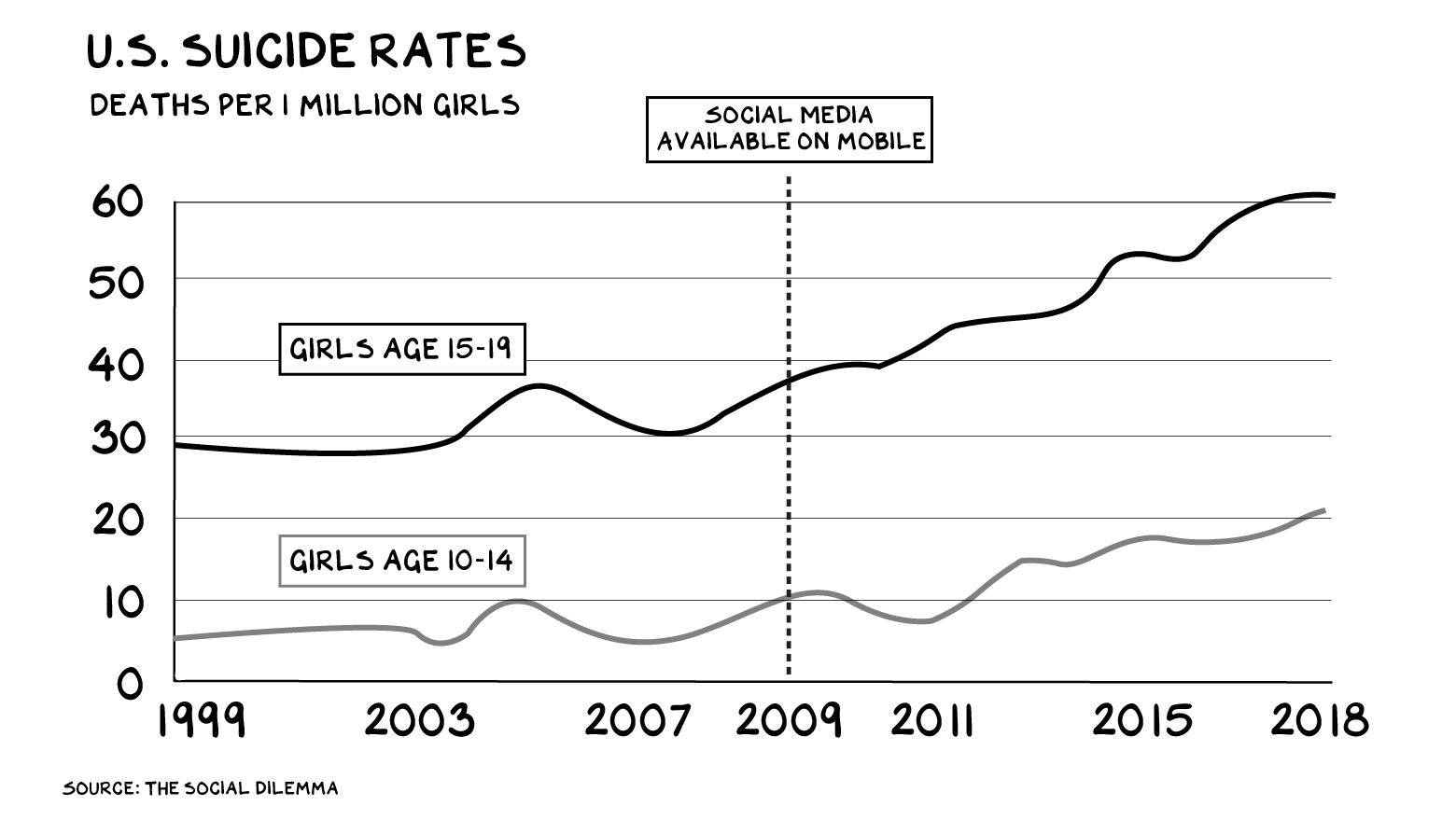

- Age Gating. My friend Brent is the strong silent type. But he said something — while we were at a Rüfüs Du Sol concert, no less — that rattled me, as it was so incisive: “Imagine facing your full self at 15.” He went on to say he’d rather give his teenage daughter a bottle of Jack and a bag of marijuana than Instagram and Snap accounts. We age-gate alcohol, driving, pornography, drugs, tobacco. But Mark and Sheryl think we should have Instagram for Kids. We will look back on this era with numerous regrets. Our biggest? How did we let this happen to our kids …

The Sword and Shield of Liability

In most industries, the most robust regulator is not a government agency, but a plaintiff’s attorney. If your factory dumps toxic chemicals in the river, you get sued. If the tires you make explode at highway speed, you get sued. Yes, it’s inefficient, but ultimately the threat of lawsuits reduces the need for regulation; it’s a cop that covers a broad beat. Liability encourages businesses to make risk/reward calculations in ways that one-size-fits-all regulations don’t. It creates an algebra of deterrence.
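Written as an inequality, that algebra is a simple expected-value comparison. The symbols are labels introduced here for illustration, not the author's: $G$ is the gain from the wrongful conduct, $F$ the punishment if caught, and $p$ the probability of getting caught. Deterrence holds only when the expected punishment exceeds the gain:

$$
G \;<\; p \cdot F, \qquad 0 \le p \le 1
$$

Because $p$ can never exceed 1, a punishment smaller than the gain ($F < G$) fails the test no matter how reliably a company is caught. That is why record fines worth weeks of cash flow don't deter.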

Social media, however, is largely immunized from such suits. A 1996 law, known as “Section 230,” erects a fence around content that is online and provided by someone else. It means I’m not liable for the content of comments on the No Mercy website, Yelp isn’t liable for the content of its user reviews, and Facebook, well, Facebook can pretty much do whatever it wants.

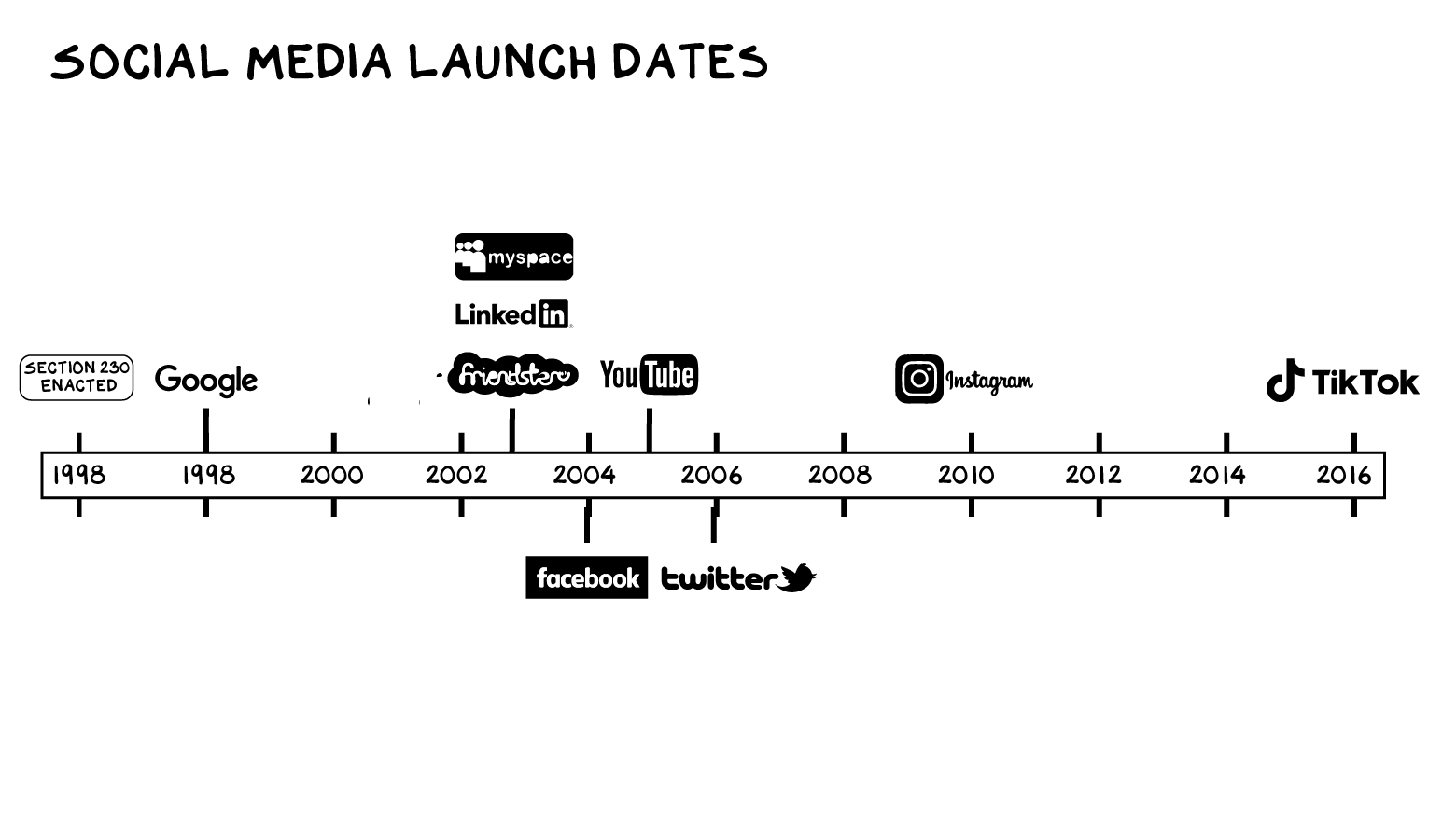

There are increasing calls to repeal or reform 230. It’s instructive to understand this law, and why it remains valuable. When Congress passed it — again, in 1996 — it reasoned online companies were like bookstores or old-fashioned bulletin boards. They were mere distribution channels for other people’s content and shouldn’t be liable for it.

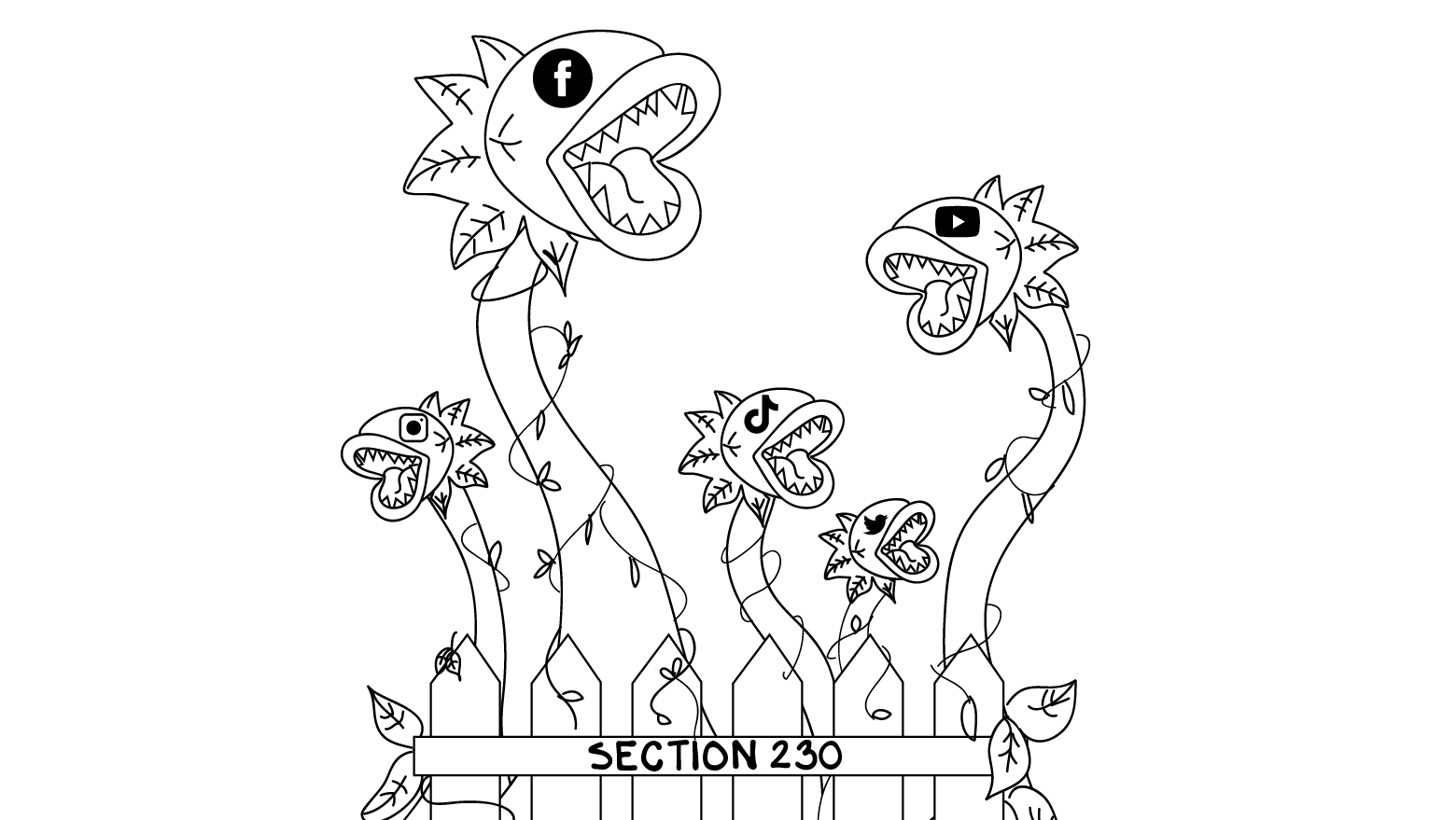

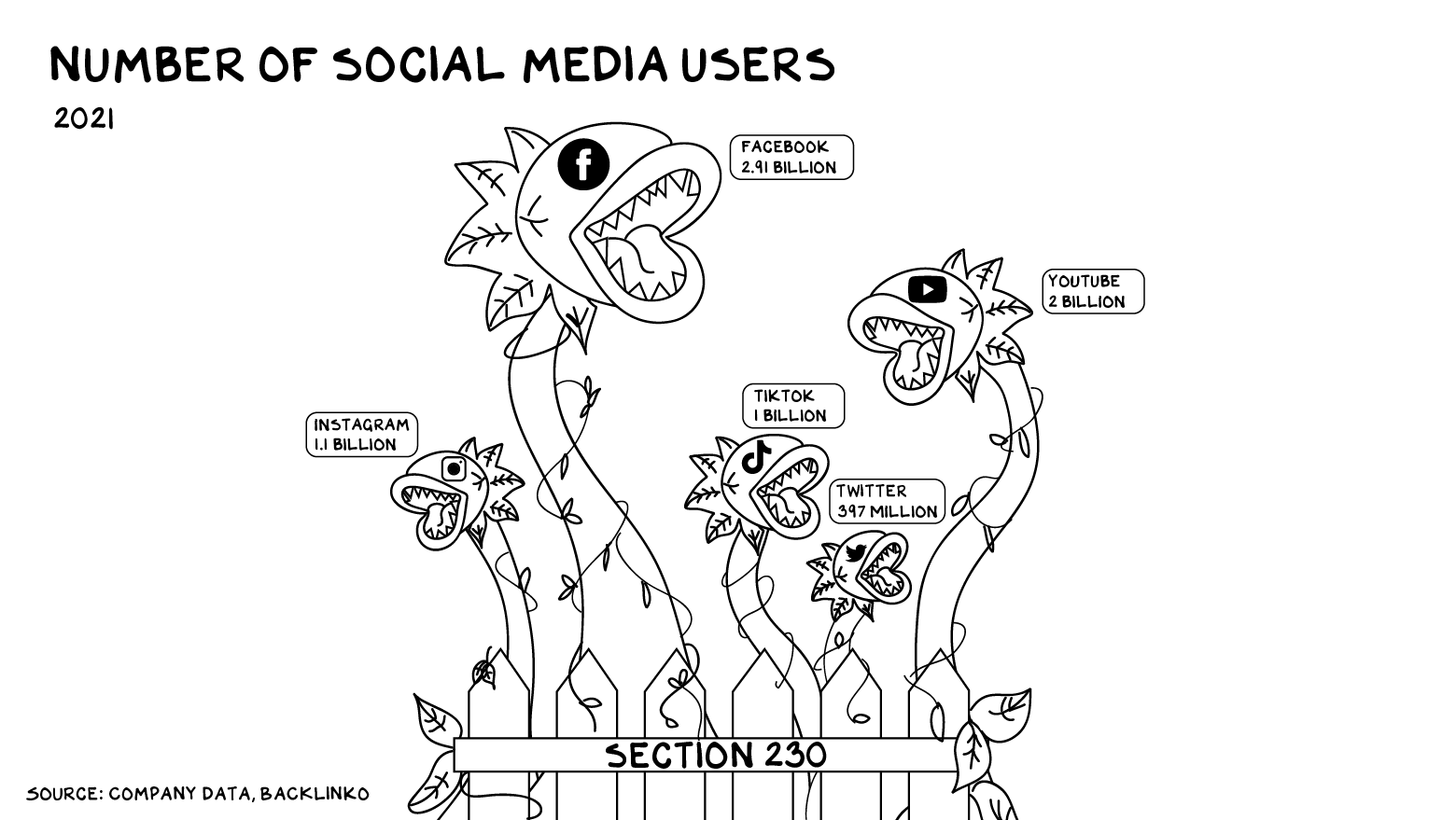

In 1996, 16% of Americans had access to the Internet, via a computer tethered to a phone cord. There was no Wi-Fi. No Google, Facebook, Twitter, Reddit, or YouTube — not even Friendster or MySpace had been birthed. Amazon sold only books. Section 230 was a fence protecting a garden plot of green shoots and untilled soil.

Today those green shoots have grown into the Amazon jungle. Social media, a category that didn’t exist in 1996, is now worth roughly $2 trillion. Facebook has almost 3 billion users on its platform. Fifty-seven percent of the world population uses social media. If the speed and scale of consumer adoption is the metric for success, then Facebook, Instagram, and TikTok are the most successful things in history.

This expansion has produced enormous stakeholder value. People can connect across borders and other traditional barriers. Once-marginalized people are forming communities. New voices speak truth to power.

If it hadn’t been for social media, you never would have seen this.

However, the externalities have grown as fast as these businesses’ revenues. Largely because of Section 230, society has borne the costs, economic and noneconomic. In sum, behind the law’s liability shield, tech platforms have morphed from Model UN members to Syria and North Korea. Only these Hermit Kingdoms have more warheads and submarines than all other nations combined.

Social media now has the resources and reach to play by the same rules as other powerful media. We need a new fence.

Unleash the Lawyers

With Section 230, the devil is very much in the details. I’ve gone through an evolution in my own thinking — I once favored outright repeal, but I’ve been schooled by people more knowledgeable than me. One of the best things about having a public profile is that when I say something foolish, I’m immediately corrected by world-class experts (and others). This is also one of the worst things about having a public profile.

I’ve struggled with Section 230, trying to parse the various reform proposals and pick through the arguments. Then, last week, I had lunch with Jeff Bewkes. He ran HBO in the 1990s and 2000s, then ascended the corporate hierarchy to become CEO of Time Warner, overseeing not just HBO, but CNN, Warner Bros., AOL, and Time Warner Cable. In sum, Jeff understands media and stakeholder value well. Really well.

Traditional media was never perfect, but most media companies, Jeff pointed out, are held responsible for the harms they cause through liability to those they injure. Similar to factories that produce toxic waste or tire manufacturers whose tires explode, CNN, HBO, and the New York Times can all be sued. The First Amendment offers media companies broad protection, but the rights of people and businesses featured on their channels are also recognized.

“It made us better,” Jeff said. “It made us more responsible and the ecosystem healthier.”

But in online media, Section 230 creates an imbalance between protection and liability, so it’s no longer healthy or proportionate. How do we redraw Section 230’s outdated boundary in a way that protects society from the harms of social media companies while maintaining their economic vitality?

“Facebook and other social media companies describe themselves as neutral platforms,” Jeff said, “but their product is not a neutral presentation of user-provided content. It’s an actively managed feed, personalized for each user, and boosting some pieces exponentially more than other pieces of content.”

And that’s where Jeff struck a chord. “It’s the algorithmic amplification that’s new since Section 230 passed — these personalized feeds. That’s the source of the harm, and that’s what should be exposed to liability.”

Personalized content feeds are not mere bulletin boards. Any single video promoting extreme dieting might not pose serious risk to a viewer. But when YouTube draws a teenage girl into a never-ending spiral of ever more extreme dieting videos, the result is a loss of autonomy and an increased risk of self-harm. The personalized feed, churning day and night, linked and echoing, is a new thing altogether, a new threat beyond anything we’ve witnessed. There’s carpet bombing with traditional ordnance, and there’s the mushroom cloud above the Trinity test site.

This is algorithmic amplification, and it is what makes social media so powerful, so rich, and so dangerous. Section 230 was never intended to encompass this type of weaponry. It couldn’t have been, because none of this existed in 1996.

Redrawing the lines of this fence will require a deft hand. Merely retracting the protections of Section 230 is surgery with a chainsaw. It likely won’t take a single revision — this goes way beyond defamation, and the law will need to evolve to account for the novel means of harm levied by social media. That evolution has thus far been thwarted by Section 230’s overbroad ambit.

Supporters of the law correctly highlight that it draws a bright line, easy for courts to interpret. A reformed 230 may not be able to achieve the current level of surgical clarity, but it should narrow the gray areas of factual dispute. There are a number of bills in Congress attempting to address this, which is encouraging.

New Laws for New Media

Merely declaring “algorithms” outside the scope of Section 230 is not a realistic solution. All online content is delivered using algorithms, including this newsletter. Even a purely chronological feed is still based on an algorithm. One approach is to carve out simple algorithms, including chronological ranking, from more sophisticated (and potentially more manipulative) schemes. The Protecting Americans from Dangerous Algorithms Act eliminates Section 230 protection for feeds generated by means that are not “obvious, understandable, and transparent to a reasonable user.” Alternatively, the “circuit-breaker” approach would punch a hole in 230 for posts that are amplified to some specified level. Platforms could focus their moderation efforts on the fraction of posts that are amplified into millions of feeds.
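To make the distinction concrete, here is a minimal Python sketch, assuming each post carries a timestamp and an impression count (the number of feeds the platform has placed it into). It shows that even a chronological feed is an algorithm (a sort), and how a circuit-breaker rule might flag posts amplified past a threshold. The names `Post`, `chronological_feed`, `circuit_breaker_tripped`, and `review_queue` are hypothetical, and so is the one-million-impression threshold; the text leaves "some specified level" open.

```python
from dataclasses import dataclass
from datetime import datetime

# Hypothetical threshold standing in for "some specified level" of amplification.
AMPLIFICATION_THRESHOLD = 1_000_000


@dataclass
class Post:
    post_id: str
    created_at: datetime
    impressions: int  # how many feeds the platform has placed this post into


def chronological_feed(posts: list[Post]) -> list[Post]:
    """Newest first. Simple and transparent to a user, but still an algorithm."""
    return sorted(posts, key=lambda p: p.created_at, reverse=True)


def circuit_breaker_tripped(post: Post, threshold: int = AMPLIFICATION_THRESHOLD) -> bool:
    """True once a post has been amplified to the threshold or beyond."""
    return post.impressions >= threshold


def review_queue(posts: list[Post], threshold: int = AMPLIFICATION_THRESHOLD) -> list[str]:
    """IDs of the amplified posts that moderators would review first."""
    return [p.post_id for p in posts if circuit_breaker_tripped(p, threshold)]


posts = [
    Post("a", datetime(2021, 11, 1), 2_500_000),
    Post("b", datetime(2021, 11, 3), 40),
    Post("c", datetime(2021, 11, 2), 1_000_000),
]
print([p.post_id for p in chronological_feed(posts)])  # ['b', 'c', 'a']
print(review_queue(posts))  # ['a', 'c']
```

Only the amplified posts land in the review queue, mirroring the idea that moderation could concentrate on the fraction of posts pushed into millions of feeds rather than on everything.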

But the most dangerous content isn’t necessarily widely distributed; rather, it is funneled alongside other dangerous content to create, in essence, new content: the feed. The Justice Against Malicious Algorithms Act targets the personalization of content specifically. That gets at what makes social media unique, and uniquely dangerous. Personalization is the result of conduct by the social media platform; if that conduct is harmful, it should be subject to liability.
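A toy Python sketch of why a personalized feed behaves differently from a chronological one, assuming every post carries a single topic label and that the user's engagement history is the only ranking signal (production recommendation systems are far more complex). The functions `personalized_feed` and `engagement_loop` are hypothetical. A single engagement with one topic pulls every post on that topic to the top, and if the user keeps engaging with whatever ranks first, the history narrows to that topic.

```python
from collections import Counter


def personalized_feed(candidates: list[tuple[str, str]], history: list[str], k: int = 3) -> list[str]:
    """Rank (post_id, topic) pairs by the user's past engagement with each topic.

    sorted() is stable (also with reverse=True), so ties keep the incoming
    order, e.g. chronological.
    """
    affinity = Counter(history)  # topic -> number of past engagements
    ranked = sorted(candidates, key=lambda c: affinity[c[1]], reverse=True)
    return [post_id for post_id, _ in ranked[:k]]


def engagement_loop(candidates: list[tuple[str, str]], history: list[str], rounds: int) -> Counter:
    """Assume the user always engages with the top-ranked post; return topic counts."""
    topic_of = dict(candidates)
    history = list(history)  # copy so the caller's list is not mutated
    for _ in range(rounds):
        top = personalized_feed(candidates, history, k=1)[0]
        history.append(topic_of[top])
    return Counter(history)


candidates = [("p1", "news"), ("p2", "diet"), ("p3", "sports"), ("p4", "diet"), ("p5", "music")]
print(personalized_feed(candidates, history=[]))        # ['p1', 'p2', 'p3']
print(personalized_feed(candidates, history=["diet"]))  # ['p2', 'p4', 'p1']
print(engagement_loop(candidates, ["diet"], rounds=4))  # Counter({'diet': 5})
```

With no history, the feed simply preserves the incoming order. After one diet engagement, the ranking regroups the diet posts into a diet-first feed, a new arrangement that no single post contains on its own.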

I’m not a lawyer, so I’m not interested in debating the legal niceties of standing or scienter requirements. Law serves policy, not the other way around, and I trust that in addition to taking on the technical details, our friends in the First Amendment legal community will join in a good faith effort at reform. That hasn’t always been the case. Anyone who begins an argument by suggesting that Facebook has anything in common with a bulletin board isn’t making a serious argument.

Some opponents of Section 230 reform would put the burden on users. Give us privacy controls, make platforms publish their algorithm, and caveat emptor. It. Won’t. Work. People couldn’t be bothered to put on seat belts until we passed laws requiring it. And there’s no multibillion-dollar profit motive driving car companies to make seat belts as uncomfortable and inconvenient as possible. The feed is the ever-improving product of a global experiment running 24/7. Shoshana Zuboff said it best: “Unequal knowledge about us produces unequal power over us.” What’s required is the will to take collective action, for the commonwealth to act through force of law.

In 1996, when Section 230 was passed, it provided prudent protection for saplings, but that was a different age. In 1996, Jeff was CEO of HBO, the premier cable channel, with 30 million subscribers. Its corporate parent, Time Warner, was a 90,000-employee global colossus. His boss, Gerald Levin, was regarded as “perhaps the most powerful media executive in the world.” Meanwhile, on the Internet, the biggest brand was America Online — which had a mere 6 million subscribers and 6,000 employees. Emerging businesses, including AOL, needed the protections of Section 230, and their potential justified it.

At the end of our conversation, I asked Jeff if he’d been concerned about the implications of Section 230 or online media in 1996 — back when he was running HBO. He shook his head. “Not at all,” he said. “Not until 2000.” What happened in 2000? I asked. “AOL bought us.”

Breakups, perp walks, age gating, identity, and liability. We have the tools. Do we have the will?

Life is so rich,

P.S. Last December, I predicted Bitcoin would hit $50K — and I was right. We’ll see what I’m right about next year. My Predictions 2022 event is coming December 7 at 5pm ET. Register now.

50 Comments

I would argue that Zuck got his manipulative data sets from Nigel Oakes, creator of PsyOps after decades of research and Beta Testing with foreign elections via traditional marketing. Zuck just gave him the platform on which to unleash his tactics.

Read all about it here: https://docs.google.com/document/d/1UY2JYKbiq5CN4PBYvf_JYKKOYVZNVpyxjmzdVjwMTUg/edit?usp=sharing

Can you comment on the talk between Zuckerberg and Harari about changing who you are without knowing it? It was about 2 years ago…

And Zuboff’s book about capitalism…

Just delete your Facebook account. If you’ve read this entire article and now this comment, you’ve spent enough of your life concerned about Facebook. Concern yourself no more.

Excellent summary. I’d add two other areas. 1) Address the commercial model (not sure of the solution). LinkedIn has a subscription service for users. When the commercial model is that “users are the product,” we get bad things. 2) Limit what tech can do with personally identifiable data. The EU (and Australia) has a good starting framework with GDPR.

Facebook tried to get into travel before, and I strongly believe they will revisit this in 2022-2023, especially given their recent announcement about the Metaverse. Who controls the most trusted user-generated content and attractions/tours inventory? Who can apply their virtual/augmented reality technology to let users try out a real experience before they book a tour or hotel room? I believe TripAdvisor’s share price will continue to slump and Facebook will buy it at a bargain to step into travel and innovate in the travel space, where they’ll be able to monetize ad revenue on a much greater scale. Facebook will buy TripAdvisor. Why do you think the TripAdvisor CEO is stepping down?

Web3 is the answer. Better product > force of law.

I think that the identity issue is huge. Lying and deceit may be as old as time, but social media propagates them with impunity on a massive scale. The notion that I can buy bots or create fake accounts to generate momentum and notoriety for a fringe thought is something not contemplated before social media sites were created. Comments sections already became mostly unreadable because of the idiotic and profane ideas that folks could express with false bravado while knowing there was no possibility of being held accountable for such garbage. Social media puts that on steroids because it personalizes the information and expands its visibility and reach with no greater transparency.

There are many aspects of social media that I do not like, but by far the biggest issue to me is that a fake person can implant a fake idea and give it credibility by generating fake likes so that it spreads like wildfire. Watch “Fake Famous” on Netflix to see what sheep this can make of us on light-hearted stuff, and then extrapolate to what those with truly nefarious ambitions can do with these tools.

I’m entitled to the water that chemical manufacturers pollute. I didn’t bargain for the smoke created by smokers around me. OTOH, I’m not entitled to Facebook; it isn’t forced on me, and I use Facebook by choice. While the dangers of Facebook are well described, there’s a difference between the harm caused by water pollution and passive smoking on the one side and the harm caused by Facebook on the other. Ergo, there must be a proportional difference in the culpability of polluters and tobacco companies on the one side and Facebook on the other.

Teen suicides and insurrections harm us all.

sad

Until there is a way to absolutely verify who is who, all this is bunk. There are 14-year-olds out there who can beat the age-gating technology in existence. The best would be to shut it all down, but there is too much money and too many stakeholders to let that happen. IMHO this stuff has destroyed a lot of what used to be our country.

Great

If they have to verify identity, and can only use that verified identity, how would you deal with stolen identities? You could silence someone that way. Could you work around identity verification by setting up a shell organization?

He’s right. Late to the dance, but better that than walking out saying nothing. There will still be music playing, and healthier dancers on the dance floor.

I don’t trust a single politician or academic to regulate things without censorship. I mean, plenty of countries already have modern policy equating psychological discomfort with violence; that’s enough for me to place a hard stop on any attempts at regulation of online speech. In the last U.S. election, social media censored valid information on the pretext of tackling fake news… I don’t trust anyone; we are all biased, we are all human. I’d rather keep the status quo than have a bunch of elites in the first world deciding that my disagreement with the idea that “there is no such thing as biological sex” is equal to violence.

If there is to be regulation, I hope it’s done on big companies only (those that can afford to hire the millions of poor bastards who will need to be arbiters of social media policy and law and delete the “bad” user-generated content).

Also… I would venture that those who argue for eliminating anonymity have most likely never feared the people who hold power over them. I would recommend they go to Mexico and talk about cartels online, and see how long they dare to do so, or how long they stay alive. The point is that anonymity protects the vulnerable and allows orthodoxy to be challenged without consequences to a person’s livelihood. A simpler example is modern cancel culture: online mobs that seek to impose their morality on others shouldn’t be allowed to do so. So long as they are, and so long as stating that “arson is bad” can have negative ramifications for the person making that obvious statement, I will oppose any attempt at eliminating anonymity online.

Thanks for standing by freedom-of-speech principles. Verifiable online identity is the worst for freedom and the best for an authoritarian state. Prof Galloway needs to go and spend some time in an authoritarian country, which his home can quickly become if we follow what he is suggesting…

Five steps to refactor social media: https://stevenhessing.medium.com/5-steps-to-fix-social-media-3f794e9c141 . When we take back our data, we take back control; we can select our own algorithms and easily migrate our data to new and better services

Bitcoin at $50k was a no-brainer.

I recently completed a Master’s dissertation on whether the UK Government had the ability to regulate ‘Big Tech’, and the answer was overwhelmingly negative, because:

1. Most legislators wouldn’t understand much of your article, and, judging by various Senate hearings, I don’t think it differs in the USA.

2. The UK legal effort entitled ‘Online Harms’ is an attempt at a catch-all set of laws that obviates the need to understand the technology, and it is designed to regulate yesterday’s problem.

3. ‘Big Tech’ has a lock on the intellectual firepower to sidestep any laws, since it pays top dollar compared with what civil servants earn.

4. Multiple countries trying to regulate independently will result in the mishmash of failure that the various transnational groups have floundered in for years.

Until these issues are subject to corrective action, Zuck and his mates will remain masters of their own destiny.

I was thinking of a somewhat different approach: regard Facebook (and similar sites like TikTok and YouTube Shorts) as addictive, particularly similar to gambling, and require the sites and apps to add controls like those on gambling sites.

I saw a Section4 ad on Facebook today… you’re giving money to the company you just spent 2k words bashing.

Scott’s acknowledged that and spoken on it before. Recently, in fact.

I’m not American, but so much of what the Prof. discusses concerning US based issues has ramifications for us all around the world, particularly the liability of social media companies and Section 230.

It’s scary to what degree our lives are connected and, as a consequence, impacted by one another.

No pressure, but the world is waiting to see what you do with the Zuckerverse and his plan for world domination.

Again, an excellent and thought-provoking post, as are many of the comments. I very much liked the idea that individuals own their data and get paid if it is used. A complex solution, but as usual money talks, so making this a money issue will get people’s attention in the social media world.

Perhaps the attention should go to the founders’ partners and the ‘good’ deeds they do using their wealth, for example Jeff Bezos’s ex-wife, Melinda Gates, 23andMe, etc. There has been too much attention on the founders, and they are all singing the same songs.

Perhaps we can persuade Kim Jong Un to nuke the FB server farms.

1. Rupert Murdoch is a much bigger threat to humanity than Mark Zuckerberg — hands down.

2. Adults, parents and children need to accept responsibility for their own actions — I am the first two and have two of the third.

3. People are the problem — not platforms.

Exactly sir. This is a personal responsibility issue as well as an accountability one.

Picking Murdoch over Zuck IS the problem. We are driven to pick sides based on our base political view that we refuse to question. It would be better to not blame anyone and simply acknowledge that our eyeballs are being sold by everyone. Turn off cable news, use an ad blocker, and minimize/eliminate Social Media use. Your decision will change FB faster than any bill or law.

Not really a political statement. The Murdoch media empire (globally) is a cynical cancer that has not contributed anything positive to the world since their start in Australia. Facebook can go either way.

Murdoch or social media is like asking if we are at threat from heart disease or cancer. The answer is both. It’s been shown that social media platforms deciding what media to show can significantly alter people’s world views so 3 is nonsense. People and platforms interacting are a problem.

Great insight. Speaking to the political part of Facebook – it does remind me a bit of the frustration with Yellow Cab before Uber/Lyft. Or those complaining about newspapers and The Yellow Pages before Craigslist and Yelp. Or those complaining about airline prices before Travelocity or hotel prices before Airbnb. The way we get Facebook out of “politics” is by building a “better mousetrap!”

There are a million (unhappy) candidates out there asking people to visit their Facebook page because there is no social platform designed for campaigning. They push traffic to FB in hopes of winning votes. Then, when nothing is happening on their page, they get sucked into buying targeted boosts with exaggerated data about their reach and audience. A candidate can spend $50k on FB and have little more than passing name recognition to show for it. We need to give candidates (and voters) a better platform, one built for democracy, with no advertisers!

We’re in the process of building that platform and looking for investors. I’d be happy to demo any time. jim@winmyvote.com

Jim

Social media platforms and Facebook in particular are essentially only mirrors or focusing screens on a civilization dragged by chains behind a galloping technology. We know little about the insides of an iPhone. We know even less about ourselves.

Maybe the reform of 230 should center on the “republishing” of content? As soon as you allow forwarding or sharing, or use the content to enrich your own offering, you should be liable for it.

A blog post comment such as this one shouldn’t make you responsible for it, but as soon as you share it with a larger audience (whether manually or through some algorithm), that’s crossing a clear line and thus makes the amplifying entity responsible for it.

Obviously this would increase the burden disproportionately on businesses that build their product around user-generated content, and maybe that should be the point.

One of the most thoughtful examinations of the issue I’ve read. Needs to happen.

Love your idealist thinking professor. All good points IF we lived in a functioning Democracy with an equitable and functional justice system (just ask Steve Bannon). Ahh, the good old days when the US wasn’t on the cusp of being a failed state

Thanks for offering a reasonable approach to reformulating 230. Now we just need legislators who understand both tech and law, and can put preconceived biases and lobbyist white papers aside, to develop the reformulation and enforcement mechanism.

This is an excellent read. Thank you for sharing it.

Once again a well written article. And as usual I disagree with much of what you wrote.

What you got right, importantly, was accountability. Users should have to be authenticated; they should not have anonymity, which provides an easy path to unlawful behavior.

The basic/ simple solution is to make it FB’s responsibility to authenticate the user. If that was accomplished the user who violates the law would be held accountable by government. FB should never be required to act as the policeman because they’re not and there will always be grey areas. Of course as you logically stated people can sue when slandered.

Regarding Privacy- FB does not sell user data.

Facebook is a platform to communicate, paid for by ads. Outside of leaks and mistakes, FB does not violate privacy. FB does not sell user identities; FB delivers the ads, not the advertisers.

FB uses algorithms on your personalized feed to engage you- and makes you more likely to use their service for longer periods of time. This has evolved and is still evolving.

In America it has always been legal for business to make their product or service so good that the user wants more. Yes, if “more” is harmful we create regulations to limit that harm.

Teenagers have always had issues with fashion magazines and ads promoting the perfect body. And- we never regulated these businesses. Why? Is this really different? And would eliminating FB’s behavior really change culture in this regard?

Well stated, and thank you. Thinking of the sentence here, “…platforms that offer trust and security as a value proposition, vs. rage and fear” I ask others with this kind of knowledge to think about what platforms such as those currently exist and if not how do we create them?

Banks, Investment companies and insurance companies all offer trust and security as a value proposition. They are all heavily regulated as well. The converse of that is the virtual currency world that sells greed and fear to attract money. They operate with no regulation.

The algorithms are used to enhance the disproportionate power of the biggest internet players who generate revenue and influence through the sale of that data. Currently, all users grant the ownership of their data to the collecting company through the Terms of Use statements most people never read and can hardly understand.

There is a simple solution that will cut across ALL platforms. It declares that the CONSUMER owns the data. Whoever wants to use the data for any purpose beyond the approved interaction with the collecting party must pay the consumer for its use.

For example: A Facebook user would have a ‘payway’ relationship with Facebook which allows for the transfer of funds. Every time Facebook shares non-aggregated data with an advertiser or other third party the user would get paid a small fraction of a cent. Facebook would have a small annual fee that would be reduced and likely eliminated for a user who was reasonably active on Facebook. A user who provided no information by not posting or interacting much would have to pay the fee. An active user would actually accumulate a credit balance in their account.

In a situation where Amazon is acting as a transaction platform for a non-Amazon seller, both Amazon and the seller would be able to use the data without a fee because Amazon was acting as an agent of the seller. In the case when the sale was for a product sold by Amazon directly, only Amazon would use the data for its own business without a fee. However, if either Amazon or the seller uses the data by distributing it to a third party, or uses it outside the core business which allowed the original collection of the data, a fee would need to be paid.

In an OpenTable/Restaurant situation, OpenTable is acting as an agent of the restaurant, so neither OpenTable nor the restaurant would have to pay for the data submitted by the diner (this would be explained in the terms of use). However, if OpenTable used the data for other activity beyond the reservation, they would have to pay for its use. If the restaurant wanted to use the data for its CRM system, there would be no additional cost, regardless of whether it used its own CRM software or contracted with someone like SevenRooms. However, if the restaurant distributed the data to some third party for their use (like a travel company), the diner would need to be paid.

In the case of Google collecting your location information using the location services on your phone, any time they sold your location information to an advertiser to direct you to local businesses, you would be paid. If they used it to order the priority of organic search listings, that would not be paid.

If Google sold the aggregated, anonymized location data to an advertiser to help them understand where customers were likely to be found, that would not be paid.

This plan sounds complicated, but could be accomplished without too much difficulty. Its implementation would doubtless create a host of businesses that would provide the infrastructure for establishing and maintaining the payway for all users, as well as the corresponding approval to use. They would accumulate credits and make the money available to the user on a periodic basis. Those same companies would be policing the industry to increase their compensation. Those businesses would provide transaction information to the user so he/she could manage the use of their data. There would be an option prohibiting the use of the data, in which case there would be no payment.

The ownership of data would be extended to ALL personal information: Demographic, Location, Medical, Financial, Consumer, Travel, images and all manner of data collected. The responsibility to pay for the sale of data would extend to any company that sold accumulated data or used it for purposes beyond the basis for the original collection of that data.

This could be implemented by a simple law passed by Congress (which would be emulated around the non-communist world):

Henceforth, all personal data collected remains the property of the person it describes, who must be compensated for its use unless such uncompensated use is specifically agreed upon. Each person retains the right to prohibit such use. Additional language would define the data, compensation, and usage, as well as the penalties for violations.

Establishing a payway would eliminate the literally millions of troll accounts across the internet, because the payway process would either be too expensive for them or would point, through the money flows, to the actual user behind each account. This would also curb the current abuse of social media by unseen actors, both foreign and domestic, to influence public discourse and elections.

There are several obvious objections to this system:

1. One objection is that unbanked individuals would be blocked from the system. A small tax on the money passing through the payway clearing houses could be used to support the accounts of proven unbanked individuals.

2. Another objection is that users will click through sites to generate income for themselves. This would be largely prevented because the initial collection of data is unpaid. The user gets paid only when the company that collected the data monetizes it.

3. Another objection is that the payway clearinghouses would end up as huge repositories of personal information. This is true, but that information would be available to the user for reviewing, revising, blocking, or removing. There is little doubt that many companies have already built their business models around harvesting and storing information about as many individuals as possible. Those storehouses of information are opaque and inaccessible to the people linked to them. This system would bring legitimacy and transparency to data accumulation.

4. Another objection is that small businesses would be burdened with compliance costs. The regulations would exempt businesses from the payment requirements until their data reselling reaches a specified size.

This bottom-up solution eliminates the need for top-down regulations that invariably distort the marketplace and create opportunities for abuse. The plan should have bipartisan appeal because it would resolve issues decried almost equally by both parties while leveling the playing field between users and internet companies.

It is time for the market to respond.

Disagree. So let's sue brick makers for graffiti? Section 230 shall stand; if not, much darker powers will take over social media and inevitably change the message in their favour, and we will have social-media-free kids in a totalitarian state. Thank you, but no. What you are suggesting, stopping progress and turning back the clock, is not going to happen. People will learn to deal with social media just as they learned not to put two fingers in an AC outlet; give us some time. Will it be over then? Hell no. Some Galloway the Third will be showing the same scary graphs from before and after the onset of the metaverse, and they will look just as bad or worse. But again, we will deal with it when the time comes, as we have dealt with everything evolution has thrown at us so far (without too many lawyers involved).

Bravo! Time will sort this out. User accountability is the only thing missing.

Excellent post, Professor.

Hi Scott, I have a simple and straightforward idea. Since you have tremendous data skills, why don't you prepare a spreadsheet showing, for every member of Congress in both houses, the political contributions received from each of the social media platforms (or from third-party aggregators such as lobbyists)? We would get a really good idea as to why things are moving faster with regard to changing Section 230.

Good luck parsing that information. All politicians take money, as they have to in order to keep their jobs. Some can hide it better, through clever NGOs, lobbying name manipulation, and such. A donation directly from an oil company would be compared to five from fake "environmental" groups in campaign ads. And selective reporting by biased or bought-off media matters. How many people know that Al Gore's family trust was one of the largest private holders of Occidental Petroleum? Since the media generally didn't report it, few.

Next, how about a post on the entire financial services industry? You know, the one that takes risks with other people's money and then, when things go wrong, runs to the federal government (and taxpayers) to bail it out. Corporate welfare.

All you have to do is check out their dating app to know they don't give a fuck about anyone! Profiles are 90% fake and could easily be verified, but they just don't give a shit! This is a company where people are the product. It's like the cattle at a slaughterhouse. Do they really care about the cows? People are just data that is sold to advertisers. I can't even choose which friends' posts I see. Facebook chooses for me. We desperately need something better!